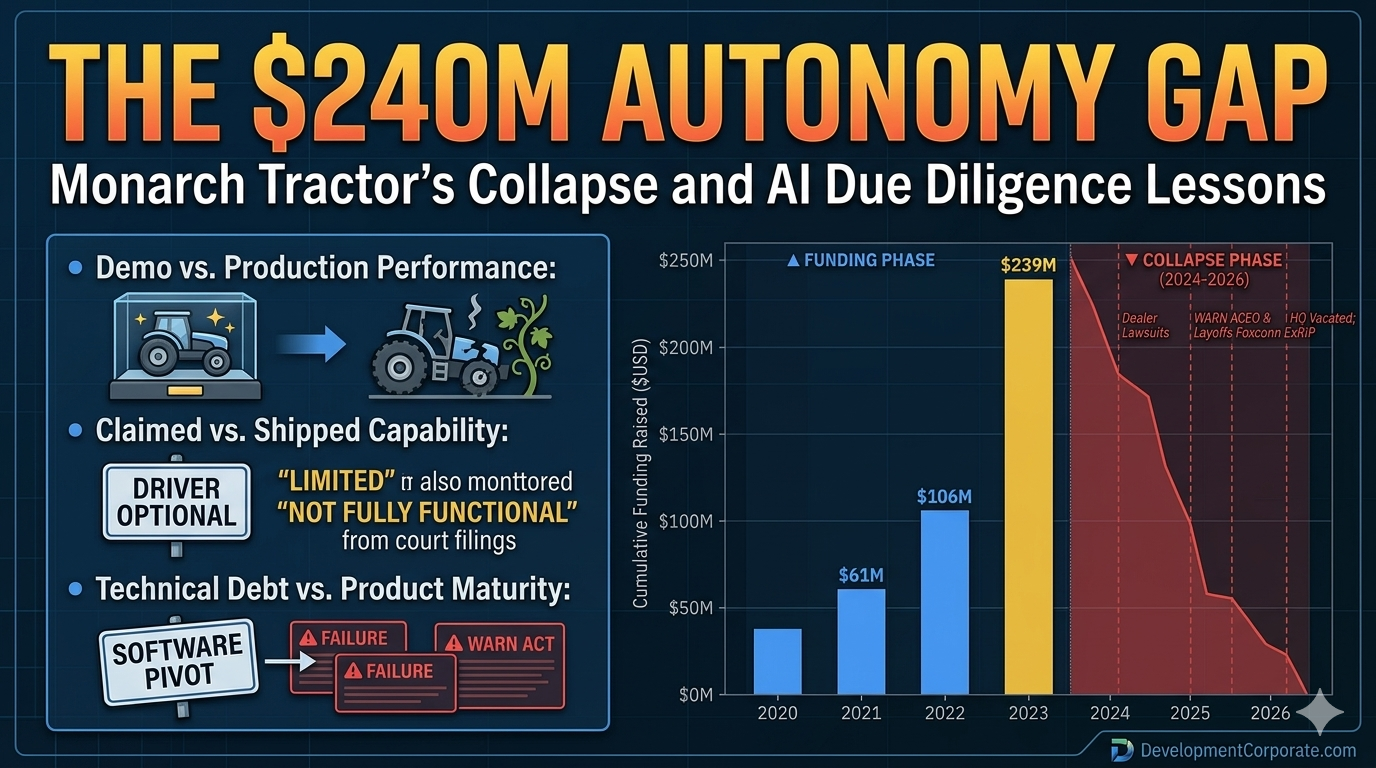

The $240M Autonomy Gap: What Monarch Tractor’s Collapse Teaches Every AI Due Diligence Team

| 📌 EXECUTIVE SUMMARY: Monarch Tractor raised $240M, reached a $518M valuation, and burned through all of it. Its autonomous AI tractors failed in real-world deployments, resulting in lawsuits, layoffs, and closure. The same “Autonomy Gap” pattern is present in enterprise software AI deals being evaluated right now. This article delivers a four-stage due diligence framework to identify and price that gap before close. |

AI startup due diligence has a new case study — and it didn’t emerge from a software stack or a venture pitch deck. It came from a vineyard in California, where a $518M-valued startup just burned through a quarter billion dollars building tractors that couldn’t drive themselves.

The consensus take is predictable: another overhyped startup that couldn’t execute. The M&A take is more useful: Monarch Tractor is a live autopsy of the Autonomy Gap — the measurable distance between what an AI system claims to do and what it actually does in production.

That gap doesn’t just destroy hardware companies. It destroys software valuations, voids IP moats, and generates post-close liability that no rep-and-warranty policy was designed to cover. If your deal team isn’t measuring the autonomy gap in every AI-native target you evaluate, you are repeating the mistake that incinerated $240M in investor capital.

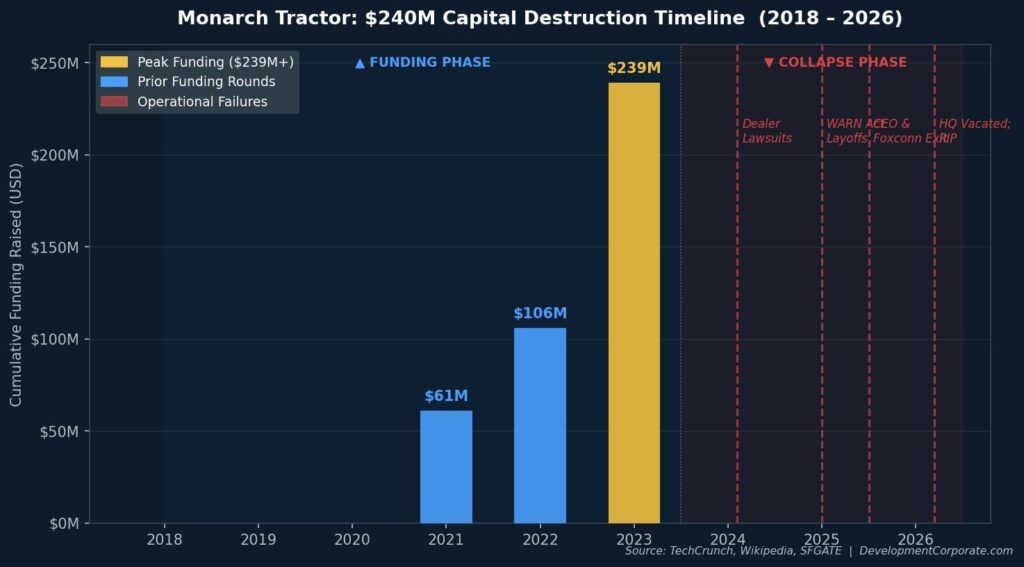

Figure 1: Monarch Tractor Capital Destruction Timeline, 2018–2026. Source: TechCrunch, Wikipedia, SFGATE.

What the Monarch Collapse Actually Reveals

The standard narrative frames Monarch as a hardware startup that couldn’t scale. That framing misses the point.

Monarch Tractor was founded in 2018 by a team that included the executive who oversaw construction of Tesla’s Gigafactory in Nevada and Carlo Mondavi of the iconic Mondavi winemaking family. Time named the MK-V one of its best inventions of 2023. Fast Company placed Monarch on its 2024 list of most innovative companies in agriculture. This was not a garage project. It was a credentialed, well-funded, media-validated venture with a $518M valuation.

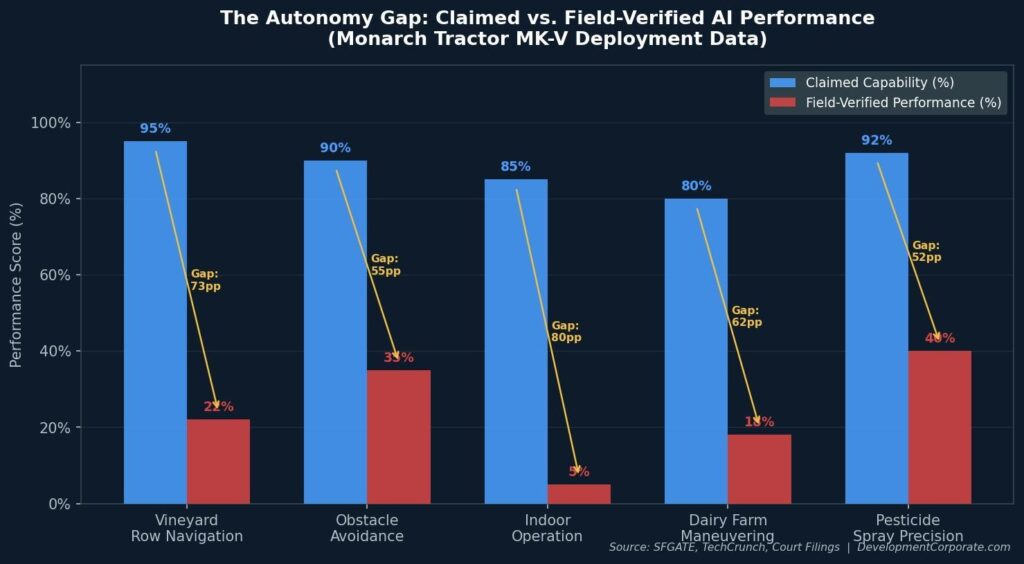

California winemaker Patrick O’Connor, who tested the tractor for three years on his vineyard, delivered a blunt verdict in a recent Instagram video that accumulated over 25,000 likes: “It totally failed.” More pointedly, he called it “actually quite dangerous” — and described a quarter billion dollars as having been “wasted” on a “failed autonomous AI robot tractor.” His specific complaints: finicky hydraulics, an automated row-follow system that did not work, and machines that kept hitting his vines.

O’Connor’s tractor now serves as a $200,000 log splitter. Multiply that across roughly 500 shipped units and you have the commercial reality that $240M in venture capital could not fix.

Court records are equally damning. Idaho dealership Burks Tractor paid Monarch $773,088 for ten tractors and is still paying interest on the financed purchase. Monarch’s own sales team acknowledged in writing that the tractors’ autonomy was “limited” and that the tractors could not function autonomously indoors.

| ⚠️ Key Signal for Deal Teams: Monarch’s sales team knew. They acknowledged capability limitations in writing while the company continued to market the product as “driver-optional.” That is not a product failure. That is a disclosure failure, the type that generates legal liability, destroys customer trust, and makes a software licensing pivot an act of desperation rather than strategy. |

The Autonomy Gap Is a Software Problem, Not a Hardware Problem

Here is the contrarian thesis: Monarch Tractor’s failure is not an agricultural robotics story. It is an AI product validation story — and the same failure pattern is present in enterprise software deals being evaluated right now.

The Autonomy Gap has three measurable components in every AI-native product:

1. Demo Performance vs. Production Performance

Every AI system performs better in controlled conditions than in real-world deployments. Monarch’s tractors worked in demonstrations. They failed in fields, barns, and narrow vineyard rows. In enterprise software, the equivalent is an AI feature that performs well on curated datasets during due diligence but degrades on messy, real-world customer data after close.

2. Claimed Capability vs. Shipped Capability

Monarch marketed its machines as “driver-optional.” Dealers purchased tractors based on showcase videos depicting fully autonomous capabilities. Court documents show the units either malfunctioned or entirely lacked the capability to perform autonomous work. In enterprise SaaS, the equivalent is an AI roadmap item marketed as current functionality.

3. Technical Debt Masquerading as Product Maturity

As late as November 2025, CEO Praveen Penmetsa told TechCrunch that over 70% of Monarch’s revenue had shifted to software licensing — framing the collapse as a strategic pivot. The WARN Act notice covering 89 employees told a different story. Founders under pressure reframe failures as pivots. Buyers who accept that framing without independent technical validation are absorbing the liability.
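The first component lends itself to a simple calculation. The following is a minimal sketch, not a method taken from the Monarch record: the `CapabilityMeasurement` type, its field names, and the sample rates are all hypothetical, and a real assessment would need to agree with the customer on what counts as a successful run for each capability.

```python
from dataclasses import dataclass


@dataclass
class CapabilityMeasurement:
    """One capability measured in two settings (all values hypothetical)."""
    name: str
    demo_success_rate: float        # fraction of successful runs in vendor-controlled demos
    production_success_rate: float  # fraction of successful runs at customer sites


def autonomy_gap(m: CapabilityMeasurement) -> float:
    """Return the demo-minus-production difference in success rate."""
    return m.demo_success_rate - m.production_success_rate


def rank_by_gap(measurements):
    """Sort capabilities so the largest gap comes first."""
    return sorted(measurements, key=autonomy_gap, reverse=True)


# Illustrative numbers only; they are not measurements of any real product.
sample = [
    CapabilityMeasurement("obstacle stop", 0.99, 0.90),
    CapabilityMeasurement("row following", 0.95, 0.40),
]

for m in rank_by_gap(sample):
    print(f"{m.name}: gap = {autonomy_gap(m):.2f}")
```

A large positive gap on a capability that drives the valuation is the first candidate for re-pricing.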

Figure 2: The Autonomy Gap — Claimed vs. Field-Verified AI Performance Across Key MK-V Capability Areas. Source: SFGATE, TechCrunch, Court Filings.

Three Audience Implications

For PE/VC Investors: The Valuation Bubble Has a Technical Address

Research by Teja Kusireddy, who reverse-engineered 200 AI startups, found that 73% of venture-funded AI companies are not building original technology — they are orchestrating public APIs behind polished interfaces. Monarch’s version of that problem was different in form but identical in structure: the AI capability being sold didn’t exist at the level claimed.

According to PitchBook data, AI startups received 53% of all global venture capital in the first half of 2025 — jumping to 64% in the United States — despite comprising only 29% of all funded startups globally. When capital is this concentrated, the pressure to fund bold narratives is intense. Monarch raised $133M in 2024 — a year in which dealers were already complaining that tractors couldn’t function autonomously.
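To make the concentration concrete, the two global shares can be turned into a per-startup funding ratio. This is a rough sketch under a stated assumption: it treats the 53% capital share and the 29% startup share as describing the same population and period, which the cited figures may not do exactly. The function name `funding_intensity` is illustrative.

```python
def funding_intensity(capital_share: float, startup_share: float) -> float:
    """Average funding per AI startup divided by average funding per non-AI startup.

    Both arguments are fractions strictly between 0 and 1 and are assumed
    to refer to the same set of funded startups.
    """
    if not (0 < capital_share < 1 and 0 < startup_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    ai_per_startup = capital_share / startup_share
    other_per_startup = (1 - capital_share) / (1 - startup_share)
    return ai_per_startup / other_per_startup


# Global figures quoted above: 53% of capital, 29% of funded startups.
global_ratio = funding_intensity(0.53, 0.29)
print(f"Average AI startup raised about {global_ratio:.1f}x the average non-AI startup")
```

On those inputs, the average AI startup raised roughly 2.8 times what the average non-AI startup raised, which is one way to quantify the pressure to fund bold narratives.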

The due diligence implication is direct: autonomous capability claims require field validation, not demo validation. For hardware, that means customer site visits, not factory tours. For software, it means production system access, not sandbox demonstrations.

| 💼 Investor Due Diligence Question: If the claimed AI feature has never shipped to a paying customer in uncontrolled conditions for 90+ days, treat it as a roadmap item, not a product feature. Re-price accordingly. Apply the forcing function from our AI SaaS investment trends analysis: assume a $5M seed-funded AI-native team. Could they replicate the core AI functionality in 6–9 months? If yes, there is no durable moat. |
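The 90-day rule is mechanical enough to encode. The sketch below is one possible reading of it; the function name, its parameters, and the choice to count calendar days from the first paid production deployment are assumptions made for illustration.

```python
from datetime import date
from typing import Optional

MIN_PRODUCTION_DAYS = 90  # threshold taken from the rule above


def classify_feature(
    first_paid_production: Optional[date],
    as_of: date,
    uncontrolled_conditions: bool,
) -> str:
    """Return "product" only if the feature has run for a paying customer,
    in uncontrolled conditions, for at least MIN_PRODUCTION_DAYS."""
    if first_paid_production is None or not uncontrolled_conditions:
        return "roadmap"
    days_live = (as_of - first_paid_production).days
    return "product" if days_live >= MIN_PRODUCTION_DAYS else "roadmap"


# Hypothetical dates for illustration.
print(classify_feature(date(2025, 1, 1), date(2025, 4, 1), True))   # 90 days live
print(classify_feature(date(2025, 1, 1), date(2025, 3, 1), True))   # 59 days live
print(classify_feature(None, date(2025, 4, 1), True))               # never shipped
```

Anything classified as "roadmap" would be valued as an option on future delivery rather than as current functionality.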

For SaaS Founders: The Demo-to-Deployment Gap Is Your Liability

Monarch’s founders were not operating in bad faith. They believed in the technology. But they sold capability the product hadn’t achieved, took funding against that narrative, and let the gap between claimed and actual performance accumulate past the point of recovery.

If your AI product has a demo-to-deployment gap — features that perform in controlled settings but degrade in customer environments — you are accumulating a liability that will surface in one of three ways: customer churn, legal action, or diligence repricing.

Specific questions founders should answer before beginning an exit process:

- Can you demonstrate autonomous capability in a customer’s production environment, not your own demo environment?

- Do your customer contracts accurately describe current AI capabilities vs. roadmap items?

- Do you have written documentation from customers confirming AI performance against agreed benchmarks?

- Is there any written internal or external acknowledgment that a claimed AI capability is “limited” or “not yet fully functional”?

| ⚠️ Founder Warning: Discovery finds everything. Burks Tractor’s lawsuit rested partly on written internal admissions that autonomy was “limited.” In a SaaS context, that’s a Slack message, a support ticket, or a customer email. Close the demo-to-deployment gap before entering a sale process, not during it. |

For Enterprise CTOs: “Driver-Optional” Is AgTech for “AI-Powered”

“Driver-optional” is a framing that implies current autonomous capability while technically preserving the option for human operation. That framing is structurally identical to the “AI-powered” label now attached to virtually every enterprise SaaS product on the market.

For CTOs evaluating vendor relationships — particularly for AI tools embedded in mission-critical workflows — the Monarch framework applies directly:

- Require production reference calls, not vendor-selected case studies. Talk to customers who have deployed the AI feature in uncontrolled conditions for more than 90 days.

- Test AI capability in your own environment, not the vendor’s demo environment. The gap between these two performance profiles is the Autonomy Gap.

- Demand SLA commitments on AI-specific performance metrics. If a vendor will not commit to AI accuracy, recall, or autonomy rates in contract, treat that as a signal the capability is not production-grade.
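For the last two points, a CTO can score the vendor's feature on a labeled sample from their own environment and compare the result with whatever floors the contract commits to. The following is a minimal sketch with hypothetical data and hypothetical SLA floors; the metric definitions are the standard ones for binary outcomes, but which metrics matter depends on the workflow.

```python
def recall(predicted, actual):
    """Share of real positive cases the system caught."""
    true_pos = sum(1 for p, a in zip(predicted, actual) if p and a)
    actual_pos = sum(1 for a in actual if a)
    return true_pos / actual_pos if actual_pos else 0.0


def accuracy(predicted, actual):
    """Share of all cases the system got right."""
    correct = sum(1 for p, a in zip(predicted, actual) if bool(p) == bool(a))
    return correct / len(actual) if actual else 0.0


def sla_shortfalls(measured, committed):
    """Return {metric: (measured, floor)} for every metric below its committed floor."""
    return {
        metric: (measured.get(metric, 0.0), floor)
        for metric, floor in committed.items()
        if measured.get(metric, 0.0) < floor
    }


# Hypothetical in-house test: 1 = positive, 0 = negative.
predicted = [1, 1, 0, 0, 1]
actual = [1, 0, 1, 0, 1]

measured = {"recall": recall(predicted, actual), "accuracy": accuracy(predicted, actual)}
committed = {"recall": 0.90, "accuracy": 0.50}  # hypothetical contractual floors

print(sla_shortfalls(measured, committed))
```

An empty result means the in-house test met every committed floor; any entry is a concrete number to raise with the vendor before signing.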

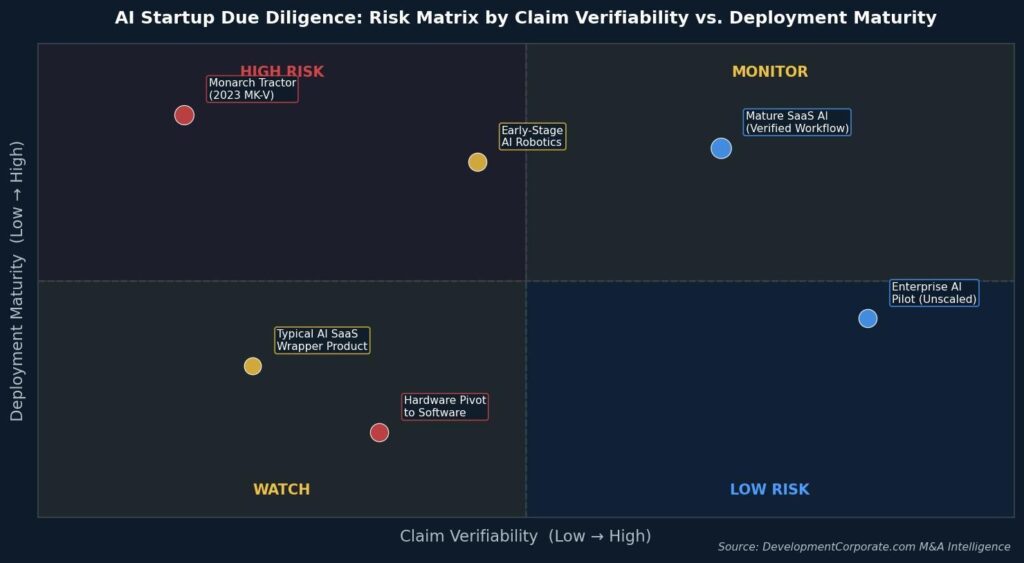

Figure 3: AI Due Diligence Risk Matrix — Claim Verifiability vs. Deployment Maturity. Source: DevelopmentCorporate.com M&A Intelligence.

The Pivot to Software: When the Hardware Fails, the Narrative Shifts

One detail in the Monarch story deserves specific attention for M&A practitioners. As the hardware business collapsed, management proposed a pivot to software licensing — marketing a product called “Monarch One,” described as a platform for autonomous off-highway equipment. The internal memo framed the WARN Act layoffs as part of a transition to “revenue-generating segments.”

This is a pattern, not an anomaly. When an AI product fails to deliver on its core autonomous capability, the recovery narrative almost always involves a pivot to software or data licensing. The rationale is appealing: the company has accumulated real-world operational data, even if the autonomous capability didn’t work. That data has value. The platform can be licensed to OEMs.

As our analysis of AI training data due diligence established, the standard acquirer question — “Does the company own or have licensed rights to its training data?” — is insufficient. The better question is what downstream derivative uses those licenses permit, and whether those uses create legal, regulatory, or reputational exposure.

For any target attempting a hardware-to-software pivot after AI capability failure, add these questions to standard diligence:

- What is the provenance of the operational data the software platform will be trained on? Data collected during failed autonomous deployments may carry contractual limitations on reuse.

- Does the software licensing model depend on IP that is currently subject to litigation? Monarch’s autonomy IP is directly implicated in multiple active lawsuits.

- Has any customer in the new software licensing model received a production deployment, or is all revenue based on pre-commercial agreements?

| 🔍 M&A Practitioner Note: The pivot narrative is not inherently false. But it should be verified with the same rigor as the original hardware claim, because the underlying problem (a gap between claimed and actual AI capability) has not changed. Only the label has. |

The Four-Stage Autonomy Gap Assessment

Whether you are evaluating an agricultural robotics company, an enterprise AI SaaS platform, or any target where autonomous AI capability is a core value driver, the following framework surfaces the Monarch-type risks that standard diligence misses.

Stage 1 — Capability Documentation Review

- Request all written representations made to customers, dealers, or partners regarding autonomous AI performance.

- Search internal communications (Slack exports, email) for any written acknowledgment of capability limitations.

- Compare marketing materials, sales decks, and contracts against technical documentation for consistency.
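The communications search in Stage 1 can start as a simple keyword pass before counsel reviews the hits. This is a sketch under assumptions: the message format (dictionaries with `id` and `text` keys) and the term list are hypothetical, and a real review would tune the terms to the target's product vocabulary and treat matches as leads, not findings.

```python
import re

# Hypothetical starter list; "limited" echoes the wording cited in the Burks Tractor dispute.
LIMITATION_TERMS = [
    "limited",
    "not yet fully functional",
    "not functional",
    "does not work",
]

# Word boundaries keep "limited" from matching inside "unlimited".
PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in LIMITATION_TERMS) + r")\b",
    re.IGNORECASE,
)


def flag_messages(messages):
    """Return the messages whose text contains any limitation term."""
    return [m for m in messages if PATTERN.search(m["text"])]


# Invented messages for illustration.
messages = [
    {"id": 1, "text": "Autonomy is limited indoors."},
    {"id": 2, "text": "Unlimited potential in this account!"},
    {"id": 3, "text": "Row-follow is NOT YET FULLY FUNCTIONAL on slopes."},
]

for m in flag_messages(messages):
    print(m["id"], m["text"])
```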

Stage 2 — Production Deployment Validation

- Identify customers with the longest production deployment of the autonomous AI feature.

- Conduct site visits or live system access in the customer’s environment, not the vendor’s.

- Request raw performance logs for the AI feature — not summary dashboards or curated case studies.

Stage 3 — Legal Exposure Mapping

- Identify all active or threatened litigation related to AI capability claims.

- Review all warranty, indemnification, and limitation-of-liability provisions for AI-specific performance.

- Assess whether any legal counsel has withdrawn from representation — a significant signal of company instability, as occurred with Monarch.

Stage 4 — Pivot Narrative Stress-Testing

- If the company is pivoting from hardware or product to software or licensing, assess whether the software product has independently verified customers separate from the original deployment base.

- Model the software licensing revenue without assuming the failed hardware customer base converts.

- Verify the IP underlying the pivot is not currently encumbered by litigation or third-party license claims.
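The second Stage 4 check reduces to a filtered sum. The sketch below assumes a hypothetical contract list with `annual_value`, `in_production`, and `legacy_hardware_customer` fields; the figures are invented and only show how far a headline number can fall once the filters are applied.

```python
def headline_revenue(contracts):
    """Sum every contract, as a pivot narrative typically would."""
    return sum(c["annual_value"] for c in contracts)


def stress_tested_revenue(contracts):
    """Count only production deployments with customers outside the legacy hardware base."""
    return sum(
        c["annual_value"]
        for c in contracts
        if c["in_production"] and not c["legacy_hardware_customer"]
    )


# Invented contracts for illustration.
contracts = [
    {"annual_value": 400_000, "in_production": True, "legacy_hardware_customer": True},
    {"annual_value": 250_000, "in_production": True, "legacy_hardware_customer": False},
    {"annual_value": 600_000, "in_production": False, "legacy_hardware_customer": False},
]

print("Headline:", headline_revenue(contracts))
print("Stress-tested:", stress_tested_revenue(contracts))
```

The distance between the two totals is the portion of licensing revenue that still depends on conversion or on pre-commercial agreements.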

Conclusion: The Harvest That Never Came

Monarch Tractor is not primarily a story about tractors. It is a story about the distance between what AI systems are said to do and what they actually do — measured in the field, by real users, in conditions that no demo was designed to replicate.

The company raised more than $240M in venture capital against a $518M valuation. It shipped approximately 500 tractors. It received industry awards from Time and Fast Company. And it is now auctioning its R&D equipment while its attorneys withdraw from active litigation.

The M&A market is full of companies raising capital against autonomous AI narratives that have not been validated in production. The Monarch case provides a clear diagnostic: demand production evidence, not demo performance. Map legal exposure to capability claims, not just to IP ownership. Stress-test every pivot narrative against independently verified revenue.

The Autonomy Gap is always measurable. The question is whether you measure it before the deal closes — or after.