The Expert Trap: Why AI Hallucinations Are Most Dangerous When Your Team Knows Better

Enterprise AI hallucination risk has a new test case, and it is one that should concern every acquirer, investor, and enterprise executive relying on AI-assisted analysis in 2026.

Peter Vandermeersch is not a naive AI user. He served as chief executive of Mediahuis Ireland, one of Europe’s largest independent news publishers, overseeing titles including the Irish Independent and Sunday Independent. Before that, he spent nine years as Editor-in-Chief of NRC, a Dutch newspaper of record. He has written extensively and publicly about the risks of AI in journalism. He has warned colleagues, specifically and repeatedly, about the danger of LLM hallucinations.

Last week, NRC published an investigation revealing that 15 of Vandermeersch’s 53 Substack blog posts contained fabricated quotes — attributed to real people who confirmed they never made the statements. Seven of those individuals have spoken on the record. Mediahuis has suspended him. The admission, published under the title “I Am Admitting My Mistake,” reads not as a confession of ignorance but as something far more unsettling: a confession of overconfidence.

| “Even I — with all my years of experience and knowledge — fell into the trap of hallucinations. I summarized reports using AI tools and worked from those summaries, trusting they were accurate.” — Peter Vandermeersch, March 2026 |

The prevailing AI governance narrative holds that experience and AI literacy are the primary defenses against hallucination risk. Train your team. Hire people who understand the technology. Deploy experts who know to verify outputs. The Vandermeersch case is a direct empirical challenge to that assumption — and it has significant implications for M&A due diligence, portfolio risk assessment, and enterprise AI adoption decisions.

What the Vandermeersch Case Actually Reveals

The details of this incident are worth examining precisely because they do not fit the standard AI failure narrative.

Vandermeersch did not use consumer-grade AI carelessly. He used tools — ChatGPT, Perplexity, and Google Notebook — that are among the most widely deployed in enterprise and professional settings. He was not writing fiction or producing marketing copy. He was writing analytical commentary on journalism, media policy, and AI governance. These are topics he has spent decades covering professionally.

The failure mode was not an obscure hallucinated data point. It was fabricated quotes: plausible-sounding, contextually appropriate statements attributed to named individuals. This is the kind of output that a subject-matter expert reading quickly would find credible and would not flag for verification.

His own admission identifies the mechanism precisely:

| “These language models are so good that they produce irresistible quotes you are tempted to use as an author. Of course, I should have verified them.” |

This is the core dynamic that M&A practitioners, PE investors, and enterprise leaders need to internalize. The hallucination problem is not primarily a literacy problem. It is a fluency problem. The more polished and contextually appropriate an AI-generated output appears, the less likely a qualified reader is to apply friction to its verification.

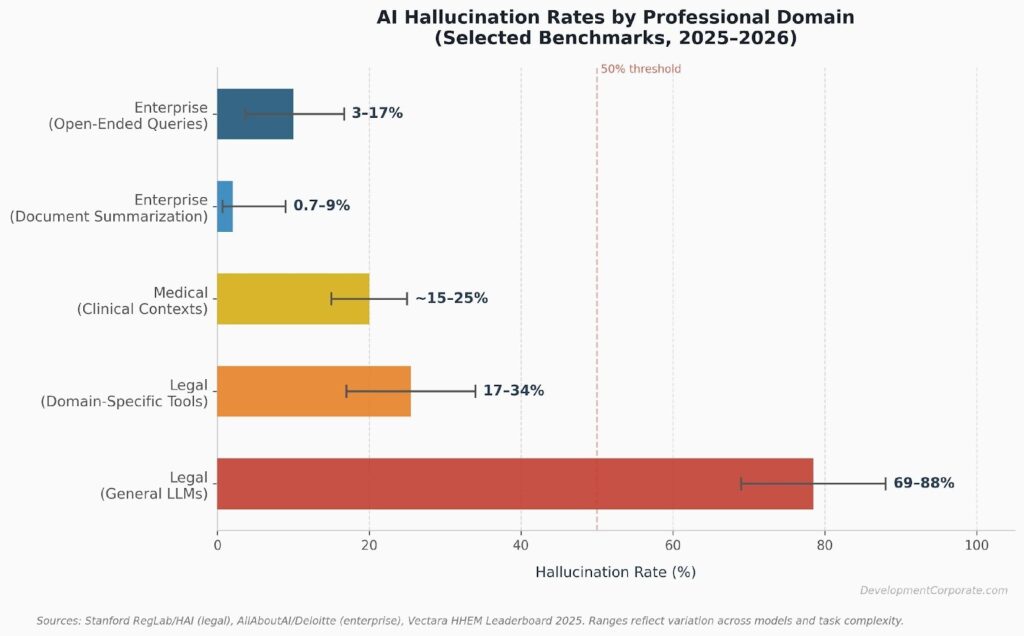

Figure 1: AI Hallucination Rates by Professional Domain (2025–2026). Sources: Stanford RegLab/HAI, Vectara HHEM Leaderboard, AllAboutAI/Deloitte.

The Expertise Paradox: Why Experience Does Not Protect Against AI Hallucinations

There is a structural reason why expertise amplifies rather than reduces hallucination risk in certain workflows, and it has direct implications for how acquirers should evaluate AI governance claims at target companies.

When a junior analyst uses an LLM to summarize a contract or research report, there is a natural workflow forcing function: they do not have the domain knowledge to trust the output without checking it against the source. Their inexperience creates verification friction.

An expert operates differently. A senior investment professional reviewing an AI-generated competitive analysis does not start from scratch with each claim. They pattern-match against their existing knowledge. When an output aligns with their priors — when it sounds right — the cognitive cost of verification is high and the perceived benefit is low. The expert’s confidence becomes a liability.

The Expert Trap: Three Mechanisms

- Fluency trust: AI outputs calibrated to sound authoritative are more convincing to experts who recognize the vocabulary and framing. Vandermeersch noted that the hallucinated quotes were “irresistible”: they read the way sources in his domain actually speak.

- Prior alignment: Experts verify claims that surprise them. They do not routinely verify claims that confirm what they already believe. An AI hallucination that supports an existing hypothesis passes through expert review intact.

- Velocity pressure: Experienced professionals are high-throughput operators. They use AI to accelerate workflows. Checking every source undermines the efficiency gain, so verification shortcuts are rationalized.

MIT research published in 2025 adds a critical data point: when AI models hallucinate, they are 34% more likely to use confident, definitive language, with phrases like “certainly,” “without doubt,” and “definitively.” On that finding, confident phrasing is not evidence of accuracy and may point the other way. Experts, trained to read confident expert language as a signal of reliability, are particularly susceptible to this dynamic.

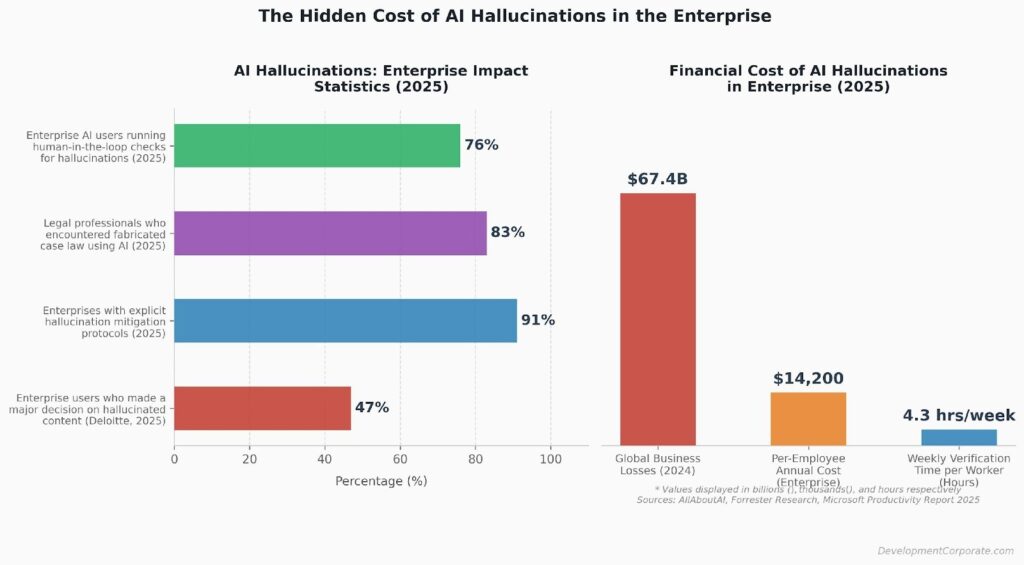

This is not a hypothetical concern. A Deloitte survey from 2025 found that 47% of enterprise AI users made at least one major business decision based on hallucinated content. These are not naive users experimenting with AI tools for the first time. They are professionals deploying AI in operational workflows.

The M&A Due Diligence Implications No One Is Talking About

For acquirers conducting due diligence on enterprise SaaS targets in 2026, the Vandermeersch case crystallizes a category of risk that is systematically underweighted in current diligence frameworks.

AI-assisted analysis is now embedded throughout the enterprise M&A workflow — in deal sourcing, competitive intelligence, customer reference synthesis, management interview summaries, and market sizing. In many cases, those AI-assisted outputs are not footnoted as AI-generated. They are presented as analysis.

The Fabricated Data Room Problem

Consider what a hallucinated output looks like in a target company’s data room. A management team that uses ChatGPT or Perplexity to draft sections of their CIM, compile customer testimonials, or summarize market research may have unknowingly embedded fabricated claims into materials that an acquirer will treat as verified facts. The hallucinated content does not announce itself. It reads exactly like the surrounding accurate content.

As we have analyzed in our research on LLM hallucinations in SaaS competitive analysis, a single fabricated funding round, misreported integration, or invented customer quote can distort strategic positioning and result in costly post-close surprises. The risk increases when the target’s team is experienced and AI-confident, which describes precisely the teams acquirers prefer.

AI Productivity Claims Built on Hallucinated Benchmarks

A second diligence risk: targets that claim measurable AI-driven productivity gains — faster release cycles, reduced support costs, improved NRR — may be presenting metrics derived from AI-generated analysis. If those analyses contained hallucinated benchmarks or fabricated comparisons, the productivity narrative collapses under scrutiny.

As we noted in our analysis of Meta’s AI productivity claims, the gap between AI narrative and verifiable operational data is where real M&A risk concentrates. The Vandermeersch case provides a direct parallel: even expert practitioners who believe they are accurately representing AI-derived insights may be transmitting hallucinations.

The “Human Oversight” Checkbox Is Not a Control

Most enterprise AI governance policies, and most acquirers’ diligence checklists, treat “human review of AI outputs” as an adequate control. Vandermeersch himself was the human review. He was not bypassing oversight — he believed he was exercising it. His admission was explicit: “The necessary ‘human oversight,’ which I consistently advocate, fell short.”

This matters enormously for how enterprise AI security due diligence frameworks need to evolve. Documenting that a target company has a human review policy is not the same as verifying that the review process is structurally capable of catching hallucinated content. The Vandermeersch case demonstrates that a world-class subject matter expert, applying genuine attention, can fail this test.

Figure 2: The Business Cost of AI Hallucinations in Enterprise Settings (2025). Sources: Deloitte, Forrester Research, Microsoft Productivity Report.

What Acquirers and Investors Should Do Differently

The Vandermeersch case does not argue for banning AI in due diligence or portfolio operations. It argues for replacing naive oversight assumptions with structural verification controls. Here is what that looks like in practice.

Upgrade Your AI Governance Diligence Questions

Standard diligence questions about AI usage — “Do you use AI in product development?” “Do you have an AI policy?” — are insufficient. The questions that matter are structural:

- Which specific AI tools are used in preparing investor materials, CIMs, customer references, and board reporting?

- What is the source verification workflow for AI-assisted analysis? Is it documented?

- Has the company audited previously published AI-assisted analysis for hallucinated claims?

- Are AI governance policies designed around output quality, or around legal compliance?

Apply Source Verification to Key Data Room Claims

Any significant factual claim in a target’s data room — a customer quote, a market sizing figure, a competitive comparison, a productivity benchmark — should be traced to a primary source. As we outlined in our AI hallucination validation framework, the “Validation Sandwich” approach — anchoring AI-generated outputs between real-world data points before and after — is the only reliable defense. Acquirers should apply the same logic to data room materials they did not generate themselves.
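For quoted statements specifically, the trace to a primary source can be partly mechanized. The following is a minimal sketch, not a prescribed tool: it assumes the reviewer already has the primary source as plain text, and the function names `normalize` and `quote_in_source` are illustrative. It only confirms that a quote appears verbatim in the source after light normalization; a failed match means "go and check by hand," not "fabricated."

```python
import re
import unicodedata


def normalize(text: str) -> str:
    """Lowercase, unify typographic punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    # Map curly quotes and dashes to plain ASCII so formatting differences
    # between the draft and the source do not cause false mismatches.
    replacements = {
        "\u201c": '"', "\u201d": '"',
        "\u2018": "'", "\u2019": "'",
        "\u2013": "-", "\u2014": "-",
    }
    for fancy, plain in replacements.items():
        text = text.replace(fancy, plain)
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_in_source(quote: str, source_text: str) -> bool:
    """Return True only if the quote appears verbatim in the primary source."""
    q = normalize(quote)
    # An empty quote never counts as verified.
    return bool(q) and q in normalize(source_text)
```

A check like this is deliberately strict. Paraphrases and stitched-together quotes will fail it, which is the point: anything that does not match the source word for word goes back to a human with the original document open.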

Treat Expert-Confident Teams as a Hallucination Risk Factor, Not a Mitigation

Teams that are sophisticated AI users, who are deploying AI at scale and moving fast, are the teams most likely to have hallucinated content embedded in their operational outputs without knowing it. This does not make them bad targets. It makes them targets that require different diligence than the standard AI literacy checkbox provides.

For PE investors managing portfolio risk, the same logic applies at the portfolio level. AI-assisted reporting on KPIs, competitive positioning, and operational benchmarks should be evaluated not just for what it says, but for whether its underlying sources can be verified. The AI safety implications for enterprise leaders extend beyond security — they reach into the integrity of the analytical frameworks companies use to make strategic decisions.

For SaaS Founders: Your AI-Generated Analysis May Be Your Biggest Liability

If you are a SaaS founder preparing for an exit or a fundraising process, the Vandermeersch case is a direct warning about your preparation materials.

Every section of your pitch deck, every customer case study, every market sizing argument, every competitive differentiation claim that was drafted, informed by, or summarized using an LLM should be treated as a potential hallucination vector. Not because you are careless — but because Vandermeersch was not careless, and it happened to him anyway.

The practical action is straightforward: conduct a hallucination audit of your investor materials before they go into a data room. Have someone who did not write the materials trace every specific factual claim — every quote, every metric, every citation — to a primary source. This is not about doubting your team’s expertise. It is about understanding that expertise and AI fluency do not guarantee output accuracy.
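One way to make that audit structural rather than discretionary is to keep a claim register and block release until every entry is traced by someone other than its author. The sketch below is an assumption-laden illustration: the `Claim` fields, `open_items`, and `ready_for_data_room` are hypothetical names, not part of any established framework.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Claim:
    text: str                              # the claim as it appears in the materials
    kind: str                              # e.g. "quote", "metric", "citation"
    author: str                            # who drafted the passage
    primary_source: Optional[str] = None   # document or URL the claim was traced to
    verified_by: Optional[str] = None      # who performed the trace


def open_items(claims: List[Claim]) -> List[Claim]:
    """Return the claims that still block release of the materials."""
    blocked = []
    for c in claims:
        no_source = not c.primary_source
        # The reviewer must exist and must not be the person who wrote the claim.
        not_independent = c.verified_by is None or c.verified_by == c.author
        if no_source or not_independent:
            blocked.append(c)
    return blocked


def ready_for_data_room(claims: List[Claim]) -> bool:
    """Materials are releasable only when no claim is left open."""
    return not open_items(claims)
```

The design choice that matters is the independence rule: a claim verified by its own author stays open, so the gate does not rest on the judgment of the person most likely to find the claim plausible.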

Acquirers who apply the diligence framework described above will find hallucinated content eventually. Better that you find it first. As we have noted in our AI safety and enterprise risk analysis, being able to answer hard questions before they are asked is one of the most effective ways to protect deal value.

The Vandermeersch Lesson: Expert Oversight Is Not the Same as Verified Accuracy

The most important sentence in Vandermeersch’s admission is not the one about falling into the hallucination trap. It is this:

| “It is particularly painful that I made precisely the mistake I have repeatedly warned colleagues about.” |

This is the Vandermeersch Paradox. The people most aware of AI hallucination risk — the people whose warnings have shaped enterprise AI governance policies — are not immune to the failure modes they describe. Expertise provides awareness. It does not provide protection.

For M&A practitioners, PE investors, and enterprise technology leaders, this case demands a fundamental reframing: AI hallucination risk is not mitigated by deploying more experienced people. It is mitigated by building structural verification into workflows that do not depend on any individual’s judgment, regardless of their qualifications.

The benchmark is not whether your team knows better. The benchmark is whether your process makes it impossible to act on a hallucination without catching it. In 2026, that is the only AI governance standard that holds up to scrutiny.

| Is Your AI Governance Framework Diligence-Ready? DevelopmentCorporate LLC advises enterprise SaaS founders and PE investors on AI risk frameworks, M&A due diligence, and exit preparation. If you are preparing for a transaction and want to stress-test your AI governance posture before acquirers do, contact us at developmentcorporate.com. |