AI Hallucinations in Legal Filings Are Now a Courtroom Crisis — And a Market Signal

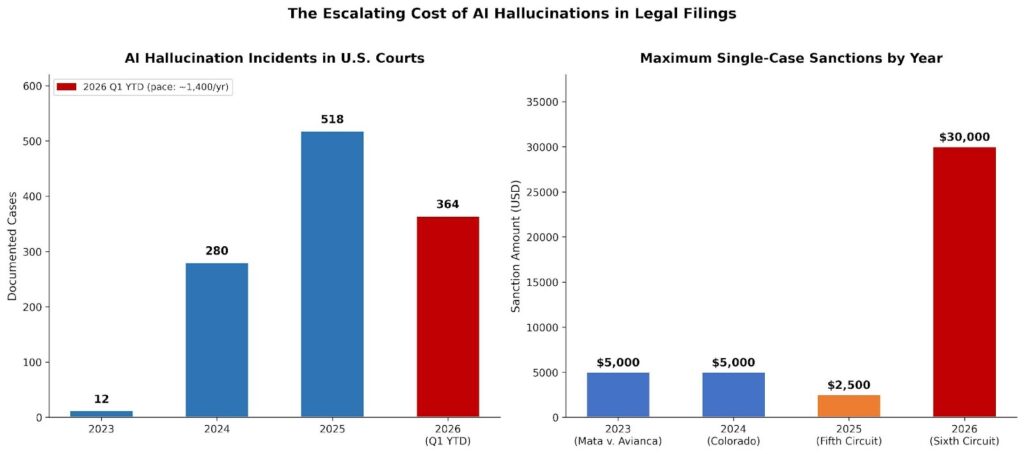

AI hallucinations in legal filings have crossed a threshold. Courts across the United States are no longer issuing polite warnings. They are sanctioning attorneys, striking briefs, revoking bar admissions, and, in the most egregious cases, issuing five-figure fines while threatening to forward cases to disciplinary boards. Researcher Damien Charlotin of HEC Paris has now catalogued at least 1,174 documented incidents of AI-generated hallucinated content submitted in courts worldwide. Since the start of 2025 alone, 518 of those cases have originated in U.S. courts, and the pace is accelerating, not stabilizing.

The legal profession is discovering, at significant cost, what enterprise software practitioners already know: AI productivity claims and AI reliability are not the same thing. For SaaS founders, PE investors, and enterprise CTOs evaluating legal tech, this crisis is both a cautionary tale and a market signal. The judicial crackdown is creating a new compliance layer — and a new category of software that didn’t exist three years ago.

From Isolated Incident to Systemic Crisis

The landmark case that started this wave was Mata v. Avianca, Inc., decided in June 2023. Attorneys Steven Schwartz and Peter LoDuca of Levidow, Levidow & Oberman submitted a brief citing six court cases that did not exist. All six were fabricated by ChatGPT, complete with plausible-sounding docket numbers and judicial opinions. Judge P. Kevin Castel of the Southern District of New York imposed a $5,000 penalty, jointly and severally, on the two attorneys and their firm, and required them to personally notify each judge whose name appeared in the fabricated opinions. The case became required reading in legal ethics courses nationwide.

Most observers treated it as an outlier. It was not. By 2024, Law360’s AI tracker had documented 280 incidents. By the close of 2025, the count had passed 729. In Q1 2026, new cases are being added weekly.

Figure 1: AI Hallucination Incidents in U.S. Courts (left) and Maximum Single-Case Sanctions by Year (right). Both metrics are trending sharply upward. Sources: Charlotin AI Hallucination Database; Law360 AI Tracker; JDJournal.

The sanctions are escalating in parallel. The first cases drew $500 fines and judicial admonishments. By late 2025, five-figure penalties had become routine. In March 2026, the Sixth Circuit Court of Appeals levied $30,000 in sanctions against two attorneys — $15,000 each — for briefs riddled with fabricated citations. The same court forwarded the matter to the chief judge for potential disciplinary proceedings.

What made that case extraordinary was the attorneys’ response. Rather than offering a contrite apology, they accused the Sixth Circuit of “engaging in a vast conspiracy” against them. The court did not appreciate the characterization.

| Key Stat: About 19% of all documented AI hallucination incidents in U.S. courts have resulted in monetary fines. Most fines remain below $5,000 — but the maximum sanction in a single case exceeded $100,000 by late 2025, and the Sixth Circuit’s $30,000 penalty in March 2026 signals that escalation is structural, not episodic. Source: Charlotin AI Hallucination Database; Social Science Space analysis. |

The Oregon Record Fine: When “I Used AI” Is No Defense

In March 2026, the Oregon Court of Appeals issued what may be the clearest judicial statement yet on the limits of AI-as-excuse. In Doiban v. OLCC, the court discovered during oral argument preparation that the petitioner’s opening brief contained fabricated case citations and “purported quotations that do not exist anywhere in Oregon case law.” The court imposed a $10,000 fine — calculated at $500 per fabricated citation (15 total) plus $1,000 for the extra burden imposed on respondent’s counsel, who spent at least 12 hours researching the phantom cases.

The court’s language was precise and deliberately unsentimental: “An attorney who signs a brief supported in full or in part by nonexistent law submits a [violation]… The advent of generative AI did not change that principle.” Earlier Oregon decisions had explicitly rejected the term “hallucination” as too gentle. Oregon Supreme Court Justice Lagesen wrote that AI “is not perceiving nonexistent law as the result of a disorder — it is generating nonexistent law in accordance with its design.”

That framing matters for enterprise risk analysis. If courts treat AI fabrication as a product functioning as designed rather than malfunctioning, the liability defense available to law firms — and to legal AI vendors — shrinks considerably.

A Pattern Repeating Across Jurisdictions

The Oregon fine followed a string of similar rulings:

- Fifth Circuit (Feb 2026): $2,500 fine on Texas attorney Heather Hersh for AI-generated errors in a brief; the court cited her failure to disclose and “misleading” the court in aggravation.

- New Orleans (Feb 2026): $1,000 fine on attorney John Walker for an LSU Health Foundation brief containing at least 11 fabricated or mischaracterized citations. Walker used both ChatGPT and Westlaw Precision AI. “To this day it blows my mind it has that capability,” he told the court.

- Sixth Circuit (March 2026): $30,000 combined sanction for two attorneys whose brief contained over two dozen fake citations — including descriptions that “did not exist, did not include the quoted language claimed, or did not discuss or support the proposition for which [they] were cited.”

- Colorado (2024): Attorney Zachariah Crabill received a 90-day suspension — not merely a fine — after filing fabricated ChatGPT citations in a custody case and then lying to the judge about their origin.

- Morgan & Morgan (2025): Lawyers at the largest personal injury firm in the U.S. were sanctioned; the drafting attorney was fined $3,000 and had his temporary bar admission revoked.

For law firms operating across multiple jurisdictions, the compliance picture is now genuinely complex. As of early 2026, over 35 state bar associations have issued formal guidance on AI use, with requirements that vary materially: some mandate disclosure in every filing, others only upon request, and several have issued opinions that a signature on a pleading constitutes certification of accuracy regardless of tool used.

Why the Problem Is Getting Worse, Not Better

The instinctive assumption is that as lawyers become more aware of hallucination risk, incidents will decline. The data does not support this. The Charlotin database shows a trajectory moving in one direction: up. The Q1 2026 case pace implies an annualized rate approaching 1,400 documented incidents — nearly triple 2025’s volume.

Three structural dynamics explain why awareness alone is insufficient.

1. The Benchmark Gap Is Enormous

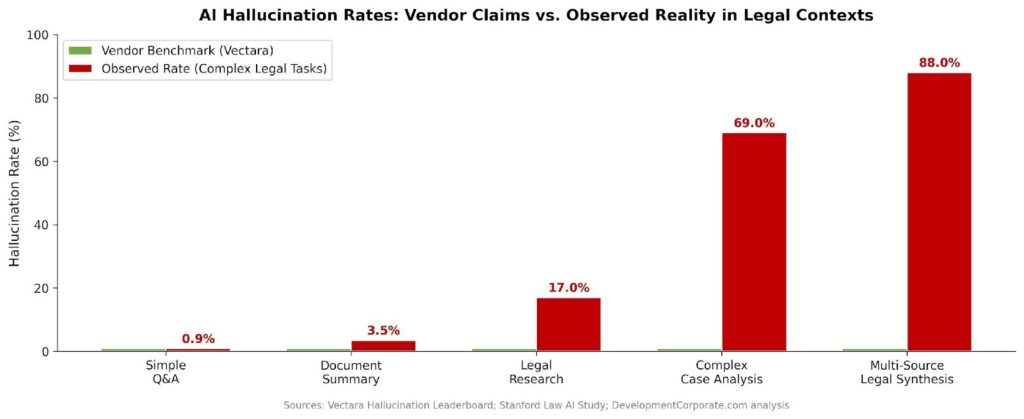

The legal AI industry’s standard marketing claim is a sub-1% hallucination rate, citing Vectara’s widely used leaderboard. That benchmark measures general-purpose output quality under controlled conditions. As DevelopmentCorporate’s analysis of hallucination rates as a due diligence crisis documented, Stanford research on complex legal queries finds observed hallucination rates reaching 69–88% on the tasks that actually matter in litigation — multi-source legal synthesis, nuanced case analysis, and jurisdiction-specific citation.

Figure 2: AI Hallucination Rates — Vendor Claims vs. Observed Reality by Task Complexity. The sub-1% benchmark measured under controlled conditions diverges dramatically from observed rates on complex legal tasks. Sources: Vectara Hallucination Leaderboard; Stanford Law AI Study; DevelopmentCorporate.com analysis.

2. The Delegation Problem Is Structural

Courts have repeatedly found that attorneys cannot delegate their Rule 11 obligations to AI. Under Rule 11(b) of the Federal Rules of Civil Procedure, a lawyer who signs a pleading certifies, to the best of their knowledge, information, and belief, formed after an inquiry reasonable under the circumstances, that its legal contentions are warranted by existing law or by a nonfrivolous argument for changing it. That duty is non-delegable, regardless of whether the source of the error is a first-year associate or a large language model.

In the New Orleans case, three of the four sanctioned attorneys had never reviewed the AI-generated brief at all. Their signatures alone created liability. The court was explicit: co-signing a document without review is “an improper delegation of [their] duty under Rule 11.” For large firms with distributed work product — which describes virtually every Am Law 100 institution — this is a systemic vulnerability, not an individual failure.

3. The Tools That Are Supposed to Help Are Part of the Problem

Attorney John Walker used two AI tools, ChatGPT and Westlaw Precision AI, and still submitted at least 11 fabricated or mischaracterized citations. Above the Law’s analysis noted that lawyers have begun blaming their legal AI research platforms for introducing errors, a dynamic it compared to “blaming a vending machine for not giving you a steakhouse dinner.” The underlying point is serious: as lawyers delegate more to AI, the mental model of AI-as-verification-tool breaks down. Using one AI to check another AI’s output does not constitute independent verification.

| Structural Risk: Courts in multiple jurisdictions have held that attorneys’ professional liability cannot be outsourced to an AI vendor. Every law firm that deploys AI-assisted legal workflows retains full malpractice exposure. Every legal AI acquisition target carries that exposure forward to the buyer. See: DevelopmentCorporate analysis of legal AI hallucinations as M&A risk. |

The Market Response: Verification SaaS as the Contrarian Play

Here is the part of this story that most coverage misses: the judicial crackdown is not just a risk story. It is a market creation event.

The volume and severity of sanctions have produced urgent demand for a product category that barely existed in 2023: AI hallucination detection and citation verification software. The most notable entrant to date is RealityCheck, launched by BriefCatch at Legalweek 2026. Its architecture is telling: it uses a two-layer verification process. The first layer is deterministic citation validation, which checks reporter volumes, court identifiers, and case names against authoritative legal databases without any AI involvement. The second is a separate AI-assisted analysis layer that evaluates whether quoted language actually appears in the cited opinion.

The product’s design philosophy implicitly validates what courts have been saying for three years: you cannot use AI to verify AI. The deterministic layer exists precisely because AI-on-AI verification produces false confidence. That is both a sound engineering decision and a compelling sales narrative in a market where every law firm is now acutely aware of the reputational and financial exposure.
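To make the two-layer idea concrete, here is a minimal sketch in Python. It is not RealityCheck's implementation, which is not public in this article. The `KNOWN_OPINIONS` dictionary is a hypothetical stand-in for a licensed legal database, the citation pattern covers only a handful of reporters, and the case names and opinion text are invented placeholders. The second layer in the sketch is a plain normalized substring match, used here in place of the AI-assisted quote analysis the product is described as using.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for an authoritative citation index. A real system
# would query a licensed legal database, not an in-memory dict. The entry
# below is an invented placeholder, not a real opinion.
KNOWN_OPINIONS = {
    ("123", "F.3d", "456"): "The court held that the duty to verify authority rests with counsel.",
}

# Simplified "<volume> <reporter> <page>" pattern. Real reporter
# abbreviations are far more varied than this sketch covers.
CITATION_RE = re.compile(r"\b(\d{1,4}) (U\.S\.|F\.[234]d|P\.[23]d) (\d{1,5})\b")


@dataclass
class CitationResult:
    citation: str
    exists: bool                  # layer 1: found in the index?
    quote_found: Optional[bool]   # layer 2: None when no quote was checked


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences do not matter."""
    return " ".join(text.lower().split())


def check_citation(citation_text: str, quoted: Optional[str] = None) -> CitationResult:
    # Layer 1: deterministic lookup, no model involved.
    match = CITATION_RE.search(citation_text)
    opinion = KNOWN_OPINIONS.get(match.groups()) if match else None
    if opinion is None:
        return CitationResult(citation_text, exists=False, quote_found=None)
    if quoted is None:
        return CitationResult(citation_text, exists=True, quote_found=None)
    # Layer 2 (simplified): does the quoted language appear in the opinion text?
    found = normalize(quoted) in normalize(opinion)
    return CitationResult(citation_text, exists=True, quote_found=found)
```

The design point is the ordering: a citation that fails the deterministic lookup never reaches the second layer, so a confident-sounding model cannot vouch for a case that is not in the database.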

What the Gordon Rees Pattern Reveals About Enterprise Adoption

The Gordon Rees incidents are instructive. The firm, one of the largest in the United States, experienced three hallucination-related incidents in the six months between October 2025 and March 2026. Three incidents at a major firm in six months is not bad luck. It is a process failure. And process failures, in enterprise software, are buying events.

When a firm of Gordon Rees’s scale has this problem three times in rapid succession, the decision to purchase a citation verification platform shifts from “interesting capability” to “compliance obligation.” Opposing counsel now has every incentive to examine every citation from a firm with a documented pattern. That creates a negative selection dynamic: the firms most visibly associated with hallucination incidents face the highest scrutiny from adversaries, creating the strongest internal pressure to implement verification tooling.

The Legal AI Verification Market: Key Dynamics

- Urgency is regulatory, not aspirational. Bar associations, courts, and malpractice carriers are all moving in the same direction simultaneously. Unlike most enterprise SaaS adoption cycles, the impetus is not ROI — it is liability avoidance.

- TAM is well-defined. Every law firm in the United States using AI tools for research or drafting is a potential buyer. That is not a speculative market — it is a provably active one with documented, escalating consequences for non-compliance.

- The deterministic verification layer is a moat. Products that combine non-AI validation against authoritative legal databases with AI analysis are architecturally superior to AI-only approaches. The legal databases (Westlaw, LexisNexis) have data that general-purpose LLMs do not reliably reproduce — and access to those databases is controlled.

- Integration creates switching costs. A citation verification tool embedded in the document review workflow — rather than a standalone check — builds the kind of workflow ownership that generates durable NRR.

For SaaS investors, the verification segment maps cleanly onto the characteristics that institutional capital is currently prioritizing. As DevelopmentCorporate’s analysis of AI SaaS investment trends documented, the funding categories attracting real VC attention share a common thread: depth, switching costs, and proprietary assets. Legal citation verification has all three.

Due Diligence Implications: What Buyers Must Ask

For M&A practitioners evaluating legal tech targets, the hallucination crisis creates a specific, actionable due diligence requirement. The questions below apply equally to standalone legal AI companies and to enterprise SaaS targets that have embedded AI into legal or compliance workflows.

For Legal AI Acquisition Targets

- What is the product’s documented hallucination rate on jurisdiction-specific citation tasks — not on general benchmarks? Ask for test results, not Vectara screenshots.

- Does the verification architecture include deterministic (non-AI) validation against authoritative legal databases? If the only verification layer is AI-based, the product inherits the same credibility problem it claims to solve.

- What malpractice or errors-and-omissions exposure has the target accrued from customer incidents? Are there any pending claims or bar complaints traceable to output quality?

- How does the target’s product handle the multi-jurisdiction disclosure requirement variance? Firms operating in multiple states face materially different obligations — a product that doesn’t surface these differences is creating compliance risk, not mitigating it.
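The first question above asks for a measured rate on jurisdiction-specific tasks. One way a diligence team might tabulate a target's test results is sketched below. The function name, the input shape, and the sample data are all hypothetical, and the sketch assumes each generated citation has already been checked by a human reviewer.

```python
from collections import defaultdict


def fabrication_rate_by_jurisdiction(results):
    """Return the share of unverified citations per jurisdiction.

    results: iterable of (jurisdiction, verified) pairs, one per citation the
    product generated in testing. verified is True when a human reviewer
    confirmed the citation exists and supports the stated proposition.
    """
    totals = defaultdict(int)
    failures = defaultdict(int)
    for jurisdiction, verified in results:
        totals[jurisdiction] += 1
        if not verified:
            failures[jurisdiction] += 1
    return {j: failures[j] / totals[j] for j in totals}


# Hypothetical test log, for illustration only.
sample = [("OR", True), ("OR", False), ("TX", True),
          ("TX", True), ("OR", True), ("OR", True)]
rates = fabrication_rate_by_jurisdiction(sample)
```

A per-jurisdiction breakdown matters because a low blended rate can hide a high rate in the one state where the buyer's customers actually file.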

For Any Target Using AI in Legal or Compliance Functions

- Has the target reduced legal or compliance headcount in reliance on AI-driven efficiency claims? If so, does that headcount reduction create undisclosed liability exposure?

- Are any representations and warranties in the target’s enterprise contracts supported by AI-generated output that has not been independently verified? This is a specific R&W exposure vector in legal tech and compliance SaaS deals.

- Has R&W insurance underwriting specifically asked about AI hallucination risk? Proactively address this in diligence rather than discovering it at the insurance stage.

As DevelopmentCorporate’s M&A due diligence checklist notes, legal and regulatory compliance review is the due diligence component most likely to surface deal-breaking liabilities that financial analysis misses. In 2026, AI reliability is a new mandatory column in that review.

| For SaaS Founders: If your product integrates AI into any legal or compliance workflow, commission a third-party hallucination audit on your domain-specific use cases before you enter an acquisition process. Buyers who discover this risk in diligence will use it to justify a discount. Founders who surface it proactively — with remediation already underway — protect their valuation. |

What Courts Are Actually Saying to the Market

Strip away the individual sanctions and the headline fines, and courts across multiple jurisdictions are communicating a consistent message that has direct commercial implications:

First, AI output is not evidence. Courts treat AI-generated citations as unverified assertions: it is the attorney’s responsibility to confirm them, not the AI’s responsibility to have gotten them right. This frames the legal AI vendor’s product as a drafting aid, not a research authority, with corresponding limits on the vendor’s liability and the attorney’s reliance justification.

Second, the duty of competence now explicitly includes AI literacy. The American Bar Association’s Formal Opinion 512 (July 2024) stated plainly that competence includes understanding the “capabilities and limitations” of AI systems before deploying them in practice. This is a purchasing criterion, not just an ethics position: law firms that cannot demonstrate AI governance processes are accumulating professional liability exposure.

Third, escalation is deliberate. As the Fifth Circuit observed earlier this year, there is “no end in sight” to AI-fabricated results appearing in legal filings. The same court noted that sanctions alone are an insufficient deterrent. The implication is that penalties will continue to increase until the behavioral incentive changes, and the behavioral incentive changes when verification becomes a standard workflow requirement, not an optional best practice.

That is a market creation narrative. Oregon’s $10,000 fine for 15 fabricated citations — at $500 per hallucination — creates a straightforward ROI calculation for verification software. If a single brief can generate $10,000 in sanctions, the willingness-to-pay for reliable citation checking at the firm level is material.
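A back-of-the-envelope version of that ROI calculation might look like the sketch below. Every input is a hypothetical assumption except the $10,000 per-brief figure, which is the Oregon fine cited above. The model counts court-imposed fines only and ignores malpractice exposure, reputational damage, and lost clients, so it understates the real cost.

```python
def expected_annual_sanctions(briefs_per_year, p_sanctioned_brief, fine_per_brief):
    """Expected yearly fines: filing volume x chance a brief is sanctioned x fine."""
    return briefs_per_year * p_sanctioned_brief * fine_per_brief


def break_even_subscription(briefs_per_year, p_sanctioned_brief,
                            fine_per_brief, risk_reduction):
    """Highest annual price at which avoided fines alone pay for the tool.

    risk_reduction is the assumed fraction of sanctionable briefs the tool
    would catch before filing (0.0 to 1.0).
    """
    baseline = expected_annual_sanctions(
        briefs_per_year, p_sanctioned_brief, fine_per_brief)
    return baseline * risk_reduction


# Hypothetical firm: 400 briefs a year, a 0.5% chance that any one brief draws
# a sanction, the $10,000 Oregon fine as the per-brief cost, and a tool
# assumed to catch 90% of problem briefs.
price_ceiling = break_even_subscription(400, 0.005, 10_000, 0.9)
```

Under those assumptions the expected fines come to about $20,000 a year and the break-even price to about $18,000. The value of the exercise is less the number than the sensitivity: doubling either the filing volume or the per-brief sanction doubles the ceiling.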

Conclusion: The Hallucination Tax Is Real, and It’s Growing

The judicial crackdown on AI hallucinations in legal filings is not a story about technophobic judges or a profession resisting change. It is a story about a technology deployed at scale before its failure modes were adequately characterized — and about the institutional response when those failure modes become visible in a context that has zero tolerance for fabricated authority.

For enterprise software practitioners, the pattern is familiar. The AI productivity paradox we have documented across sales organizations is structurally identical: vendors claim transformative capability; actual deployment at scale produces inconsistent results; and organizations that adopted without adequate verification processes absorb the cost of that gap. In legal AI, the cost is measurable in dollars, bar referrals, and client relationships.

The contrarian read is this: the judicial crackdown is the market development event that legal verification SaaS has been waiting for. Every new sanctions order is a case study in the sales deck. Every bar association guidance update is a compliance trigger for firm-wide purchasing decisions. And every instance of a major firm experiencing its third hallucination incident in six months is a buying event for a product category that solves exactly that problem.

The firms and investors who recognize that distinction, between the liability story and the market signal it creates, will be better positioned than those who read the headlines at face value.

To explore how your firm’s AI risk exposure maps to current M&A due diligence frameworks, visit DevelopmentCorporate.com or contact us directly.