Making AI Work for Britain: The Book Every Enterprise Tech Leader Should Read — and Fear

Dr. Alan W. Brown is a Professor of Digital Economy at Exeter Business School, with 30+ years of experience spanning Carnegie Mellon, a Distinguished Engineer role at IBM, and European CTO roles. He has published five books and 6,300+ cited papers on software engineering and AI transformation. We were colleagues at Sterling Software in the late 1990s.

Making AI Work for Britain is the kind of book that does not arrive often: one where the stated argument is reasonable, the evidence is solid, and the real provocation is buried in a single phrase that the author repeats, almost offhandedly, throughout. That phrase is “pilot purgatory.”

Brown, who is a professor at the University of Exeter Business School and Research Director of the Digital Policy Alliance, has written a book ostensibly about the United Kingdom’s national AI strategy. Published in 2026 by London Publishing Partnership, it is structured as a policy argument with a clear thesis: the UK is repeating the same structural mistakes in AI that it made in earlier waves of digital transformation, and time is running out to correct them.

But enterprise technology leaders reading this book — whether based in the UK or not — will find something more useful than a national policy prescription. They will find a precise anatomy of why large-scale AI initiatives fail. And that anatomy is uncomfortably universal.

The Argument Brown Is Really Making

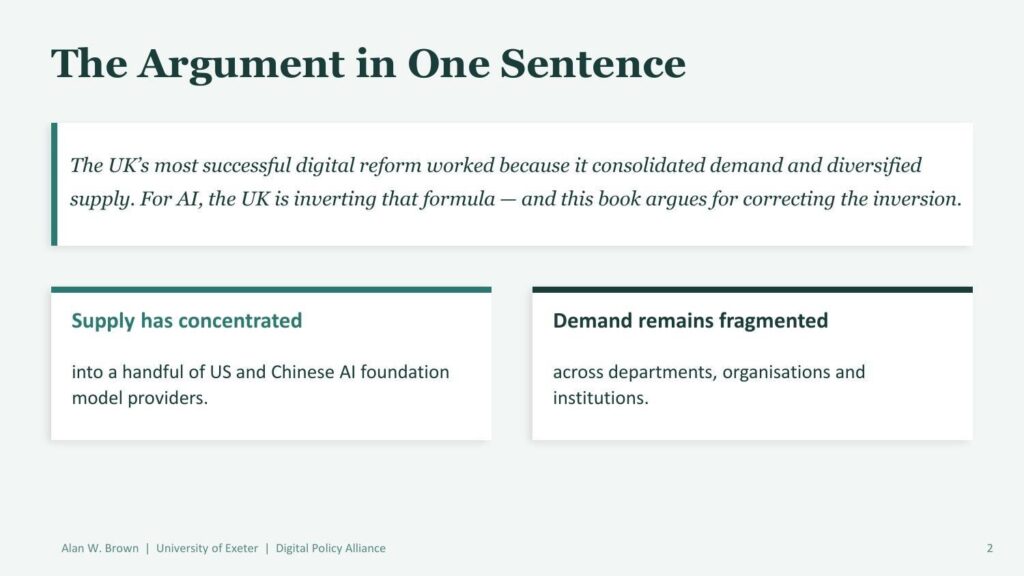

Brown opens with a disarmingly simple structural observation. The UK’s most successful modern digital reform — the Government Digital Service (GDS) — worked because it consolidated demand while simultaneously diversifying supply. One website. Common platforms. Shared standards. And a procurement reform that broke the grip of incumbent systems integrators and opened government contracts to smaller, more agile vendors.

For AI, Brown argues, the UK has inverted that formula. Supply has concentrated into a handful of US and Chinese foundation model providers. Demand has remained fragmented across departments, institutions, and agencies — each running its own pilots, each building its own vendor relationships, each reinventing the same wheel.

Brown’s central thesis: the UK is repeating a structural error — concentrated supply, fragmented demand — that is the opposite of what made GDS successful.

This is a genuinely useful observation, not just for UK policymakers but for any enterprise leader trying to understand why their AI program is producing islands of success rather than systemic change. The GDS analogy gives Brown a concrete benchmark: we have seen what works. The question is whether we are applying those lessons or ignoring them.

💡 KEY INSIGHT FOR ENTERPRISE TECH LEADERS

If your organization’s AI initiatives are scattered across departments with no consolidated demand signal and no diversified vendor architecture, you are running the same structural risk Brown documents at national scale. The GDS lesson applies to enterprises, not just governments.

The Pilot Purgatory Problem

The most valuable section of the book is Brown’s analysis of what he calls “pilot purgatory” — the graveyard where most UK AI initiatives have ended up. The pattern is familiar to anyone who has worked in enterprise AI: a successful proof of concept, strong pilot metrics, enthusiastic executive sponsorship, and then… nothing. The project does not scale. The budget does not materialize. The organizational resistance that the pilot bypassed reasserts itself at full force.

Brown is clear that this is not primarily a technology problem. The technology usually works well enough at pilot scale. The failures are institutional, political, and cultural. Successful AI implementation requires sustained organizational commitment at a level that most organizations have not yet developed the infrastructure to provide.

“The gap between successful pilot and systemic change is where most UK initiatives die — not because the technology fails, but because institutional, political and cultural barriers reassert themselves at scale.” — Alan W. Brown

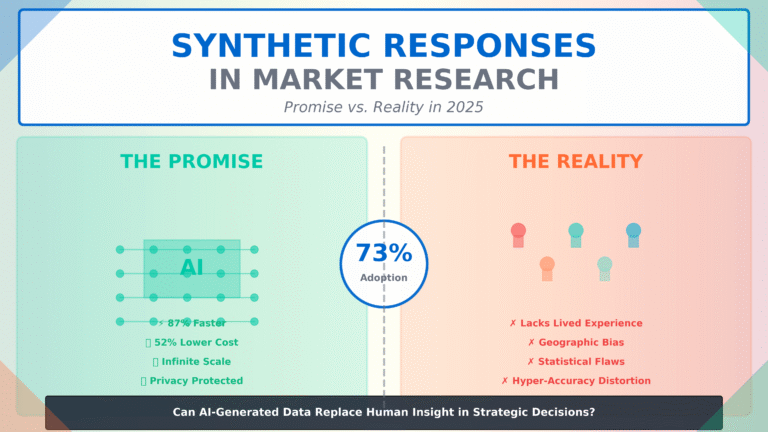

This framing should resonate deeply with enterprise technology leaders. A 2025 MIT study found that 95% of generative AI projects fail to deliver measurable results, despite billions invested. The reasons track almost exactly with Brown’s diagnosis: flawed integration, misaligned priorities, and lack of organizational readiness. The pilots work. The scaling does not. Pilot purgatory is not a UK government problem. It is a structural feature of how large organizations adopt transformative technology.

Digital Sovereignty: The Question That Has No Easy Answer

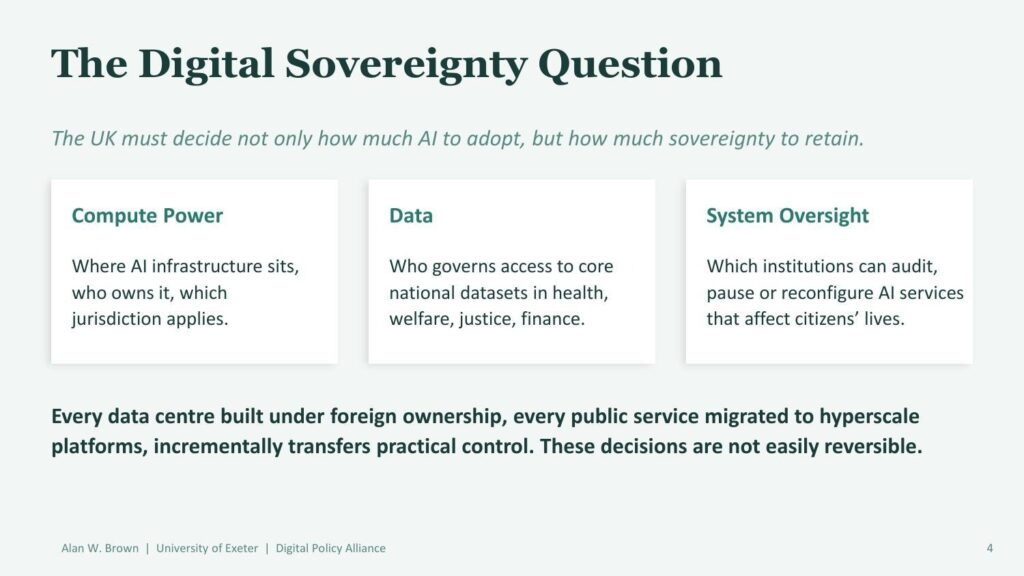

The book’s most uncomfortable chapter examines what Brown calls “the digital sovereignty question.” With over £250 billion committed to transatlantic AI infrastructure (including £22 billion from Microsoft, £11 billion from Nvidia, and £5 billion from Google), the UK faces a set of decisions that are not easily reversible.

Three dimensions of digital sovereignty that the UK must address before infrastructure dependencies become irreversible.

Brown identifies three dimensions of sovereignty at risk: compute power (where the infrastructure sits and who owns it), data (who governs access to core national datasets in health, welfare, finance, and justice), and system oversight (which institutions can audit, pause, or reconfigure AI services that affect citizens’ lives).

The argument is not that foreign investment in AI infrastructure is inherently bad. It is that each decision made under foreign ownership incrementally transfers practical control — and that these transfers accumulate in ways that are politically and technically difficult to reverse. Brown does not frame this as a crisis. He frames it as a set of choices that are being made without adequate deliberation.

Enterprise technology leaders will recognize this dynamic in their own vendor relationships. The structural dependency risks embedded in concentrated AI supply chains — where organizations are locked into a handful of hyperscale providers for model access, compute, and increasingly, data infrastructure — mirror the sovereignty risks Brown describes at national scale. The enterprise equivalent of sovereign infrastructure is multi-vendor architecture. Most organizations are not building it.

Three Strategic Paths — and Why the UK’s Choice Actually Matters for Enterprise Leaders

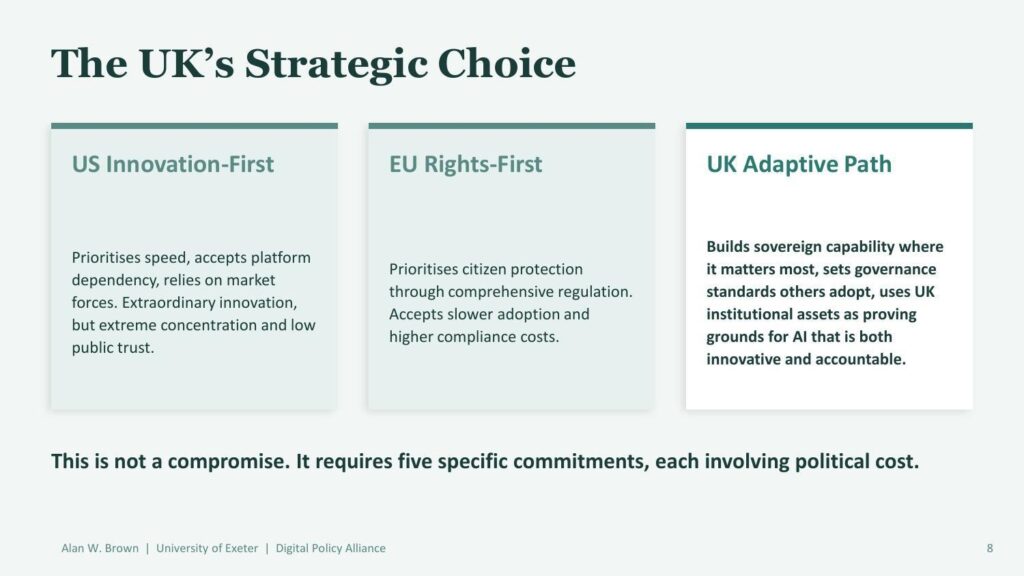

Brown’s comparative framework maps three distinct governance paths with materially different trade-offs for AI adoption speed, sovereign control, and public trust.

One of the book’s most analytically useful contributions is its comparative framework for national AI governance approaches. Brown identifies three models: the US innovation-first approach (prioritizes speed, accepts platform dependency, relies on market forces), the EU rights-first approach (prioritizes citizen protection through comprehensive regulation, accepts slower adoption), and the UK adaptive path (attempts to build sovereign capability where it matters most while setting governance standards others adopt).

What makes this framework valuable for enterprise readers is what it reveals about the regulatory environment their AI investments are operating in. The US model produces extraordinary innovation but extreme concentration — which is exactly what Brown argues has created the supply-side dependency problem. The EU model produces strong governance but compliance costs that can significantly slow deployment.

The UK adaptive path, if executed well, would produce something genuinely valuable: a governance model that other countries and enterprises can adopt as a template. Brown is explicit that this is not a compromise position between the US and EU models — it requires specific institutional commitments, each with political cost. Whether the UK has the political will to make those commitments is the book’s central open question.

🔍 WHAT THIS MEANS FOR ENTERPRISE AI GOVERNANCE

The three-model framework Brown describes is not just a policy analysis. It is a map of the regulatory environments your AI vendor relationships are embedded in. US-origin models carry concentration risk. EU-origin governance carries compliance cost. Understanding which model governs your critical AI infrastructure is a vendor due diligence requirement, not an academic exercise.

Four Implementation Principles That Work Everywhere

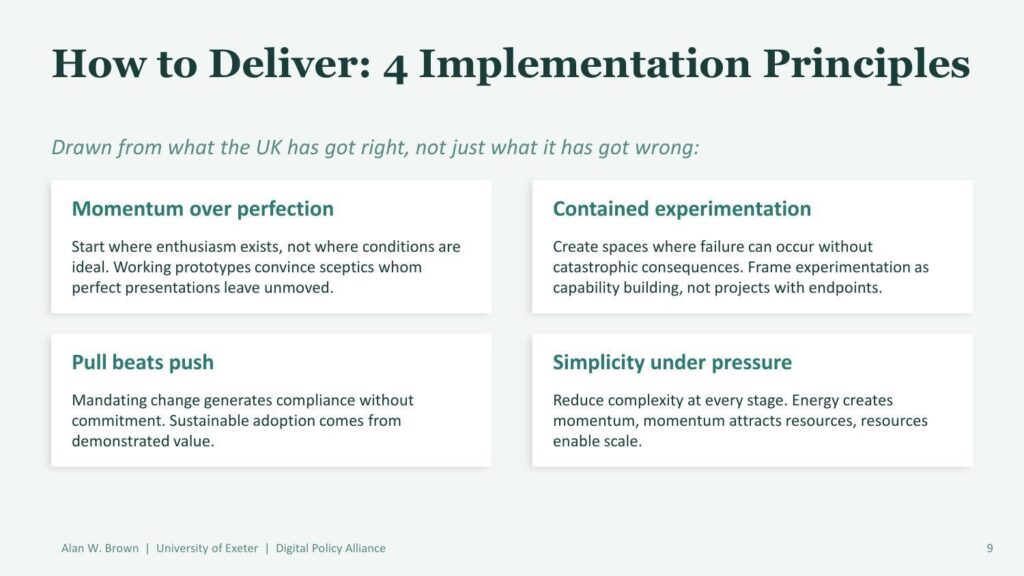

The most immediately practical section of the book is Brown’s articulation of four implementation principles drawn from successful UK digital transformation programs. These are presented as lessons from what actually worked — not just from failures.

Brown’s four implementation principles are drawn from successful cases, not idealized frameworks — and they apply directly to enterprise AI program design.

Momentum Over Perfection

Start where enthusiasm exists, not where conditions are ideal. Working prototypes convince skeptics whom polished presentations leave unmoved. This is straightforward advice, but it cuts against the tendency of large organizations to require comprehensive business cases and enterprise-wide rollout plans before approving anything. The organizations that are making real progress in AI are shipping working systems in months, not years.

Contained Experimentation

Create spaces where failure can occur without catastrophic consequences. Frame experimentation as capability building rather than as projects with defined endpoints. This principle is directly relevant to AI hallucination risk, which is emerging as a significant governance challenge for enterprise AI. Organizations that treat every AI deployment as a production-grade system from day one will either deploy nothing or face consequential failures in high-stakes contexts. Contained experimentation creates the learning infrastructure that responsible scaling requires.

Pull Beats Push

Mandating change generates compliance without commitment. Sustainable AI adoption comes from demonstrated value that creates internal demand, not from top-down directives. This principle explains why so many enterprise AI mandates produce impressive adoption statistics (everyone has the tool installed) with depressing utility metrics (almost no one is using it to change how they work). The gap between AI tool availability and genuine enterprise adoption is precisely what pull-over-push addresses.
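The gap between availability and adoption can be measured directly. The sketch below is one hedged way to do it, not a metric taken from the book: the field names (`headcount`, `installed`, `workflow_changed`) and the idea of counting people whose day-to-day work has actually changed are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdoptionSnapshot:
    # All three fields are hypothetical measures an organization would define for itself.
    headcount: int          # people in scope for the rollout
    installed: int          # people who have the tool available
    workflow_changed: int   # people who report using it to change how they work


def adoption_rates(snapshot: AdoptionSnapshot) -> tuple[float, float]:
    """Return (install_rate, embedded_rate).

    install_rate  = installed / headcount          (the "push" statistic)
    embedded_rate = workflow_changed / installed   (the "pull" statistic)
    """
    if snapshot.headcount <= 0:
        raise ValueError("headcount must be positive")
    install_rate = snapshot.installed / snapshot.headcount
    # Avoid dividing by zero when nobody has the tool yet.
    embedded_rate = (
        snapshot.workflow_changed / snapshot.installed if snapshot.installed else 0.0
    )
    return install_rate, embedded_rate


if __name__ == "__main__":
    snapshot = AdoptionSnapshot(headcount=1000, installed=900, workflow_changed=90)
    install_rate, embedded_rate = adoption_rates(snapshot)
    print(f"installed: {install_rate:.0%}, embedded in work: {embedded_rate:.0%}")
```

A mandate can move the first number quickly. Only demonstrated value moves the second, which is why reporting both side by side is more honest than reporting installs alone.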

Simplicity Under Pressure

Reduce complexity at every stage. Energy creates momentum. Momentum attracts resources. Resources enable scale. This sounds obvious. But large organizations have a powerful institutional tendency to add complexity — more stakeholders, more governance layers, more requirements — precisely when programs need to be moving fast. Brown’s observation that the GDS succeeded in part because it maintained relentless focus on simplification applies directly to enterprise AI program design.

Six Reform Areas — and the Enterprise Parallels

Brown’s six reform areas map onto enterprise AI governance challenges with surprising precision — particularly procurement, infrastructure, and ethics and accountability.

Brown concludes with a six-part reform agenda covering governance, procurement, infrastructure, skills and training, ethics and accountability, and international positioning. For UK policymakers, these are specific recommendations with named institutional mechanisms and measurable targets. For enterprise technology leaders, they function as a governance checklist.

Three areas deserve specific attention for enterprise readers. On procurement, Brown recommends two-year contract terms with exit-by-design clauses for AI contracts above £500,000. The enterprise equivalent is vendor contract architecture that avoids long-term lock-in — particularly relevant given the concentration of AI infrastructure into a small number of hyperscale providers.
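For readers who want to turn the procurement recommendation into a review gate, a rough sketch follows. It assumes the recommendation means a maximum term of 24 months and that "above £500,000" is a strict threshold; both readings, along with the `AIContract` type and `procurement_issues` function, are illustrative assumptions rather than anything specified in the book.

```python
from dataclasses import dataclass

# Figures taken from the recommendation as summarized above; the interpretation is assumed.
THRESHOLD_GBP = 500_000
MAX_TERM_MONTHS = 24


@dataclass(frozen=True)
class AIContract:
    # Hypothetical record of the contract attributes a review would check.
    value_gbp: int
    term_months: int
    has_exit_clause: bool


def procurement_issues(contract: AIContract) -> list[str]:
    """Return the ways a contract departs from the rule; empty if it passes."""
    if contract.value_gbp <= THRESHOLD_GBP:
        return []  # the rule only applies above the threshold
    issues = []
    if contract.term_months > MAX_TERM_MONTHS:
        issues.append(f"term of {contract.term_months} months exceeds {MAX_TERM_MONTHS}")
    if not contract.has_exit_clause:
        issues.append("no exit-by-design clause")
    return issues


if __name__ == "__main__":
    print(procurement_issues(AIContract(value_gbp=750_000, term_months=36, has_exit_clause=False)))
```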

On ethics and accountability, Brown calls for mandatory legitimacy assessments and named human accountability for AI systems affecting consequential decisions. The enterprise parallel is the AI governance framework question that most organizations are still avoiding: who is specifically accountable when an AI system produces a harmful output? Brown’s answer — named individuals with documented accountability — is the right one, even if it is politically uncomfortable.

On skills and training, the target of 50,000 civil servants trained by the end of 2026 reflects a recognition that AI literacy is not optional for knowledge workers. The enterprise equivalent is workforce AI capability development at scale, not as a one-time training event but as a continuing organizational competency.

📋 THE THREE-PHASE ROAD MAP FOR ENTERPRISE AI LEADERS

Brown’s road map (Foundations 2026-27 → Scaling & Testing 2027-28 → Systemic Embedding 2029-30) is not just a government implementation timeline. It is a useful framework for enterprise AI program design. Most organizations are still in Phase 1. Very few have a credible path to Phase 3.

What the Book Gets Right — and What It Leaves Unresolved

Brown is at his strongest when he is being specific. The GDS analysis is genuinely illuminating. The pilot purgatory diagnosis is precise and actionable. The four implementation principles are grounded in documented experience rather than theoretical frameworks. The comparative governance model (US/EU/UK) provides a useful analytical lens for understanding why different environments produce different outcomes.

The book is weaker on the question of how the AI hallucination and reliability problem intersects with public-sector deployment. Brown acknowledges AI limitations, but does not engage deeply with the empirical evidence on hallucination rates in production conditions — which, as Stanford’s AI Index documents, remain far higher in complex real-world tasks than vendor benchmarks suggest. For a book focused on implementation, this is a notable gap.

The digital sovereignty argument is compelling but incomplete. Brown is right that infrastructure decisions made today are not easily reversible. But the analysis would benefit from a deeper engagement with the specific contractual and architectural mechanisms that preserve sovereignty within foreign-owned infrastructure — because the political and economic reality is that the UK is not going to build purely domestic AI infrastructure, and neither are most enterprises.

These are constructive criticisms. The book accomplishes what it sets out to do: it provides a coherent, evidence-based argument for a specific set of institutional reforms, grounded in historical analysis of what has and has not worked in UK digital transformation. That is more than most policy books manage.

The Verdict: Required Reading for Enterprise AI Leaders

Making AI Work for Britain is not primarily a book about the United Kingdom. It is a book about how large, complex organizations fail to convert successful AI pilots into systemic capability — and what institutional architecture is required to break that pattern.

The UK government is the case study. But the diagnosis applies to any enterprise operating at scale. The pilot purgatory problem is real, the GDS lesson is transferable, and the four implementation principles are immediately applicable. If your organization is running impressive AI pilots that are not scaling into operational change, this book will explain exactly why — and what to do about it.

The strategic choice Brown describes (between speed with dependency, protection with compliance cost, and a harder middle path that requires genuine institutional commitment) is not just a choice for governments. It is the choice that every enterprise CTO and CPO is navigating right now. Brown’s framework makes that choice clearer.

Read it alongside empirical evidence on enterprise AI adoption economics and the LLM training data blind spots reshaping vendor visibility. The picture that emerges is sobering and clarifying in equal measure: the gap between AI aspiration and AI implementation is not narrowing on its own. Closing it requires exactly the kind of institutional architecture Brown is calling for.