Stanford AI Index 2026: 5 Charts That Reveal the M&A Risk in Your Next AI Deal

The Stanford 2026 AI Index — released April 13, 2026 by the Institute for Human-Centered AI — is the most comprehensive annual snapshot of where artificial intelligence actually stands. This year’s report lands with a clear headline: AI is sprinting, and the rest of the economy is struggling to keep pace. But for PE and VC investors, SaaS founders evaluating exit timing, and enterprise CTOs conducting vendor due diligence, the Stanford AI Index 2026 delivers something more operationally significant than a progress report. It delivers a due diligence signal.

Five data points — all supported by Stanford’s charts and research — have direct implications for M&A pricing, workforce cost modeling, and regulatory compliance budgets. Here they are, with the data.

The benchmarks used to justify AI valuation premiums in M&A are exactly the ones Stanford says are broken. That gap is not academic — it’s an unpriced deal risk.

1. US vs. China: Razor-Thin Margins, Maximum Stakes

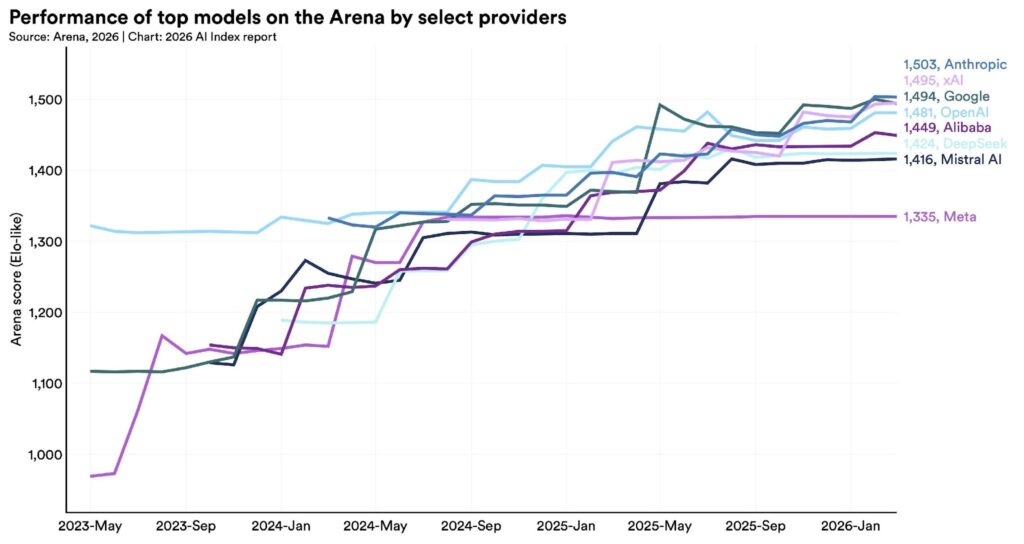

According to Arena, the community-driven LLM ranking platform, the gap between American and Chinese AI models has effectively closed. As of March 2026, Anthropic leads the Arena rankings, trailed closely by xAI, Google, and OpenAI. Chinese models — DeepSeek and Alibaba — lag only modestly.

Source: Stanford 2026 AI Index / Arena. Top AI models from US and Chinese labs are separated by razor-thin margins as of early 2026.

The Stanford report notes a critical structural difference between the two ecosystems. The US leads in capital deployment and infrastructure — an estimated 5,427 data centers, more than ten times any other country. China leads in AI research publications, patents, and robotics deployment. Neither advantage is permanent. Both are accelerating.

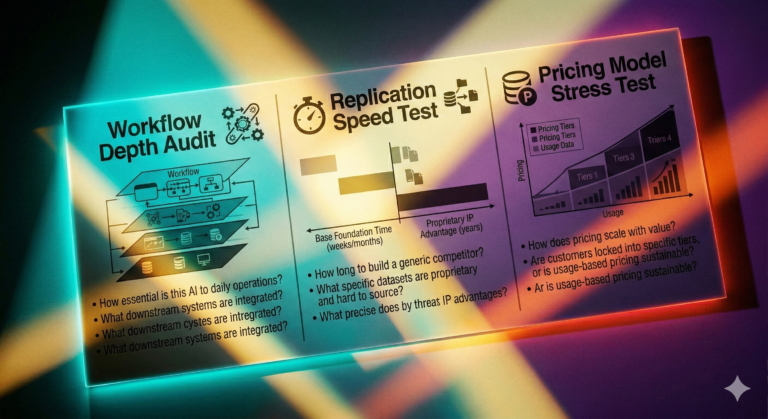

For M&A practitioners, the competitive parity narrative has a specific implication: proprietary model performance is no longer a durable moat in enterprise AI acquisitions. When the top models are functionally equivalent, competition shifts to distribution, integration depth, and data network effects. That is exactly the framework we applied in our analysis of the Agentforce Illusion in enterprise SaaS M&A — vendor-level model performance is not the same as market-level adoption.

2. The Stanford AI Index 2026 Confirms: Benchmarks Are Broken

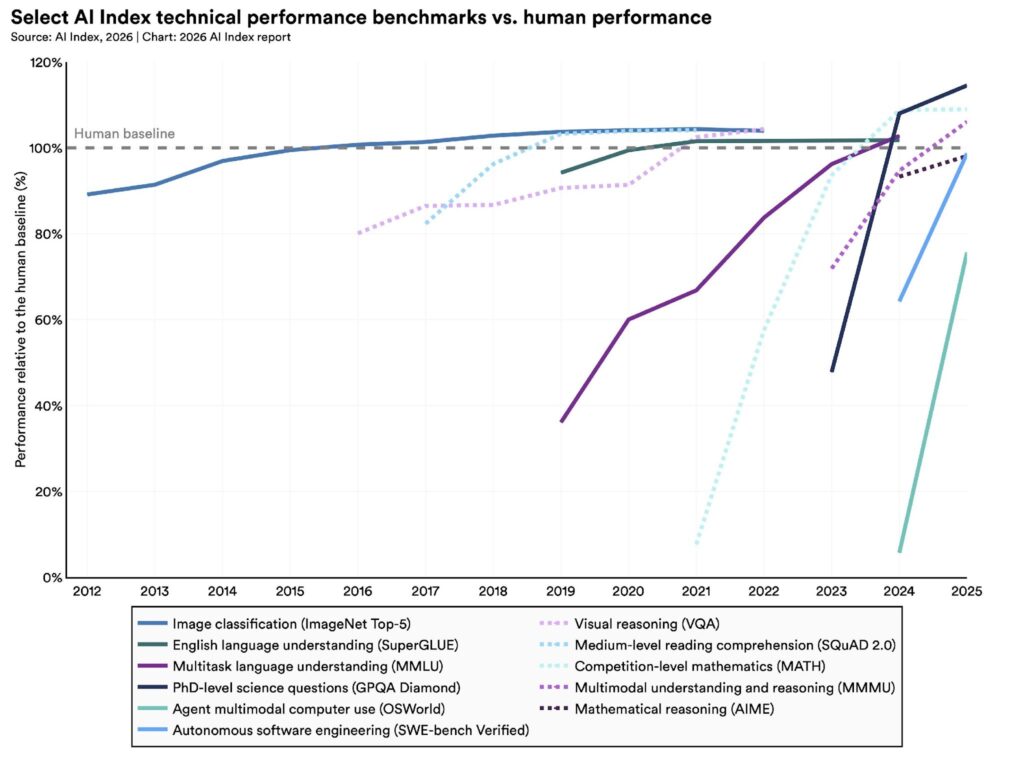

AI models continue to advance on every published benchmark. SWE-bench Verified — the leading software engineering benchmark — saw top scores jump from around 60% in 2024 to nearly 100% in 2025. Multiple benchmarks measuring PhD-level science, math, and language understanding now show AI performance meeting or exceeding human expert baselines.

Source: Stanford 2026 AI Index. AI now meets or exceeds human expert performance on several established benchmarks — but the benchmarks themselves are under scrutiny.

Here is what the Stanford report says immediately after presenting those numbers: the benchmarks are failing. One widely cited math benchmark has a 42% error rate. Models are being trained on benchmark test data, allowing them to score well without genuine capability improvement. And because AI is rarely deployed in the same conditions under which it is tested, strong benchmark scores frequently do not translate to real-world performance.

This is not a minor caveat. It is the most important due diligence finding in this year’s report. If the benchmarks that PE buyers and strategic acquirers use to validate AI capability claims are gameable, poorly constructed, and disconnected from production reality, then the premium being paid for “AI-native” targets is partially built on sand.

We documented this exact pattern in our analysis of AI hallucination rates as an M&A due diligence crisis. The vendor benchmark shows sub-1% hallucination rates. Stanford’s legal AI research shows 69–88% error rates on the complex, multi-document tasks that actually define enterprise use cases. The Stanford AI Index 2026 now provides independent academic confirmation that this benchmark gap is structural, not anecdotal.

For every AI SaaS acquisition where the investment thesis rests on benchmark-validated performance claims, buyers should ask: which benchmark, measured under what conditions, on what task type? The absence of an answer is itself a valuation input.

3. Early-Career Job Displacement and the Per-Seat Pricing Problem

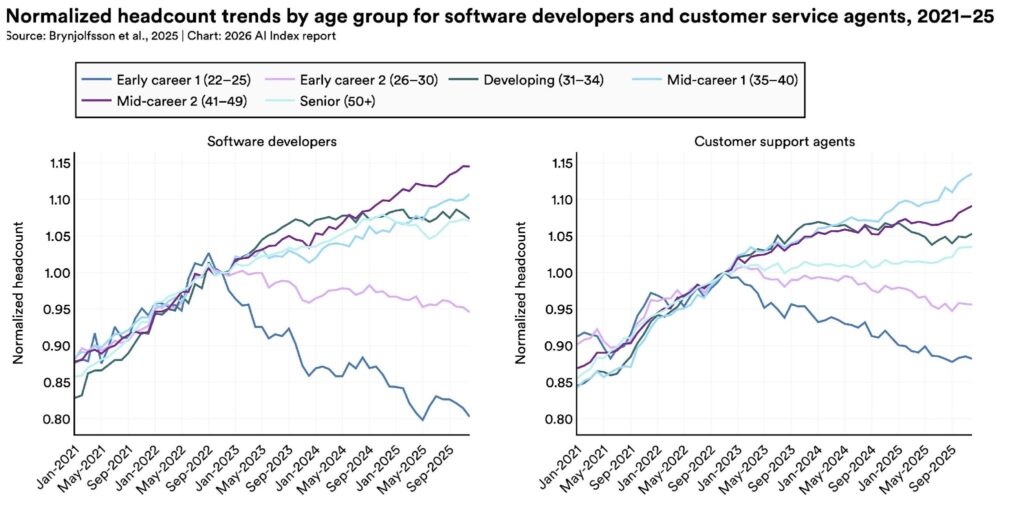

The Stanford report cites a 2025 study by economists at Stanford’s Digital Economy Lab finding that employment for software developers aged 22 to 25 has fallen nearly 20% since 2022. Customer support agents in the same age cohort show a similar but less steep decline. The report is careful to note that macroeconomic conditions may also be contributing, but it acknowledges that AI is playing a part.

Source: Stanford 2026 AI Index / Stanford Digital Economy Lab. Early-career software developers and customer support agents are experiencing the sharpest employment declines.

For SaaS companies with per-seat pricing, this chart is a revenue model stress test. The seats being eliminated first are the junior developer and entry-level support roles — exactly the user cohorts that drive headcount expansion in seat-based contracts. A 20% reduction in junior developer headcount at an enterprise customer is not a minor churn signal. It is a structural contraction in the addressable user base for developer tooling.

A 2025 McKinsey survey cited in the report found that one-third of organizations expect AI to shrink their workforce in the coming year, with the largest impact concentrated in service operations, supply chain, and software engineering. For SaaS founders building exit valuations on seat-count growth projections, this is the scenario that buyers are already stress-testing in their models.

This connects directly to the thesis we developed in our analysis of AI SaaS investment red flags and VC rejection signals: any SaaS product whose primary value proposition is accelerating junior developer or entry-level support workflows needs to model — explicitly — what its ARR looks like as that headcount pool shrinks.
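The seat-contraction scenario described in this section can be sketched as a simple stress test. This is a minimal illustration, not a valuation model: the `Customer` fields, the `stressed_arr` helper, and every input figure are hypothetical, and it assumes each eliminated junior role removes exactly one paid seat with no offsetting price change or expansion.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    name: str              # hypothetical customer label
    seats: int             # paid seats under contract today
    price_per_seat: float  # annual price per seat, in dollars
    exposed_share: float   # fraction of seats held by junior dev / entry-level support users


def stressed_arr(customers, headcount_cut):
    """Return (baseline ARR, stressed ARR) if exposed seats shrink by headcount_cut."""
    baseline = sum(c.seats * c.price_per_seat for c in customers)
    lost = sum(
        c.seats * c.exposed_share * headcount_cut * c.price_per_seat
        for c in customers
    )
    return baseline, baseline - lost


# Illustrative inputs only; none of these figures come from the Stanford report.
top_customers = [
    Customer("Customer A", seats=1000, price_per_seat=600.0, exposed_share=0.40),
    Customer("Customer B", seats=500, price_per_seat=600.0, exposed_share=0.20),
]

# Run the 15% and 20% headcount-reduction scenarios.
for cut in (0.15, 0.20):
    base, stressed = stressed_arr(top_customers, cut)
    change = (stressed - base) / base
    print(f"cut={cut:.0%}  baseline=${base:,.0f}  stressed=${stressed:,.0f}  change={change:.1%}")
```

The sensitivity that matters is `exposed_share`: a product sold mainly to junior developers or entry-level support teams sees nearly the full headcount cut flow through to ARR, while a product with a mixed user base sees a fraction of it.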

4. The Expert-Public Perception Gap Is a Buyer-Seller Negotiation Gap

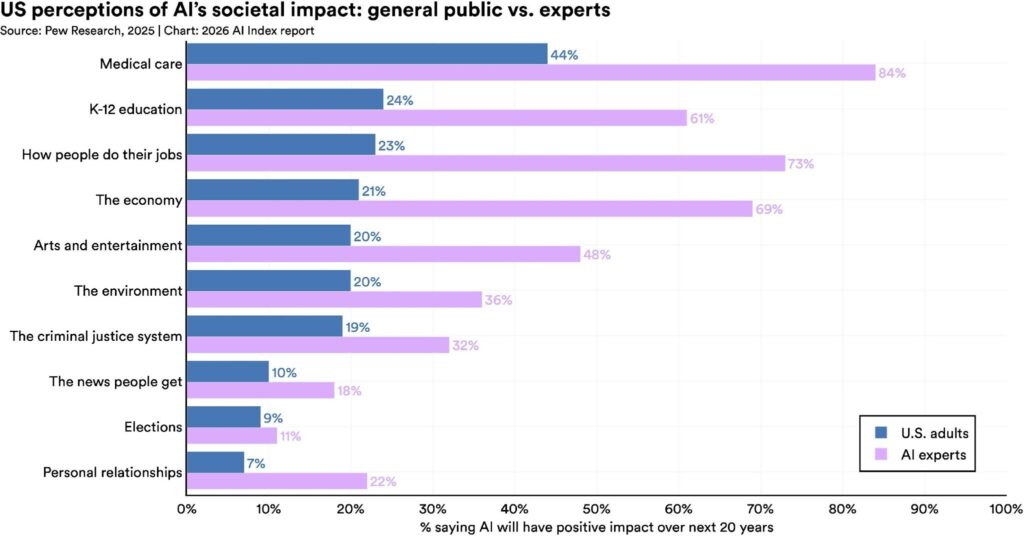

The Stanford report includes an Ipsos survey showing that 59% of people globally believe AI will provide more benefits than drawbacks — while 52% say it makes them nervous. But the more actionable finding comes from a Pew Research Center survey comparing AI expert and general public sentiment.

Source: Stanford 2026 AI Index / Pew Research Center. AI experts are 2–3x more optimistic than the general public across nearly every outcome category.

The numbers are striking. Seventy-three percent of AI experts think AI will have a positive impact on how people do their jobs. Only 23% of the American public agrees. Experts are significantly more optimistic than the public on education and medical care. On elections and personal relationships, both groups are pessimistic — but roughly aligned.

In an M&A context, this divergence has a direct structural parallel: seller expectations versus buyer valuations. SaaS founders who have spent years inside the AI ecosystem share the expert worldview — they have seen what the technology can do, they believe the productivity narrative, and they price their company accordingly. Buyers representing institutional capital are increasingly benchmarking against public and enterprise workforce reality, not vendor benchmarks.

The result is a perception gap that shows up as a valuation gap. We documented the financial consequences of this dynamic in our analysis of the 2026 AI valuation gap in SaaS M&A. Buyers are paying AI premiums — but only for the top tier of assets that can demonstrate verifiable AI-driven differentiation. Everything below that threshold faces multiple compression regardless of how the founder frames the AI narrative.

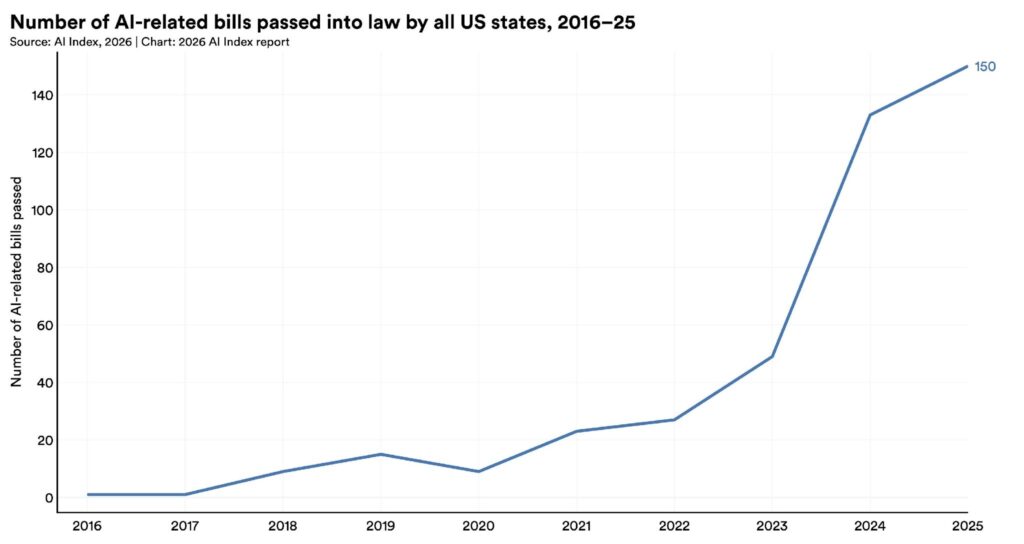

5. 150 State AI Bills in 2025: The Compliance Cost Nobody Is Pricing

Governments are struggling to regulate AI, the Stanford report finds, but some are moving faster than others. The EU AI Act’s first prohibitions took effect in 2025, banning certain predictive policing and emotion recognition use cases. Japan, South Korea, and Italy passed national AI laws. At the federal level, the US moved toward deregulation. At the state level, the opposite happened.

Source: Stanford 2026 AI Index. US state legislatures passed a record 150 AI-related bills in 2025 — a sharp acceleration that creates a patchwork compliance environment for enterprise SaaS.

State legislatures passed a record 150 AI-related bills in 2025. California’s SB 53 mandates safety disclosures and whistleblower protections for AI developers. New York’s RAISE Act requires AI companies to publish safety protocols and report critical safety incidents. The Stanford authors are explicit: regulation is running behind the technology because we do not fully understand how it works.

For SaaS companies with enterprise customers distributed across multiple US states, the compliance surface area is expanding faster than most compliance budgets are growing. The fragmentation is the problem. A single federal standard is manageable. A patchwork of 150 state-level bills, each with different definitions, thresholds, and enforcement mechanisms, is a material operating cost that belongs in every deal model.

We have tracked this regulatory fragmentation as an emerging M&A liability in our coverage of AI training data due diligence and legal AI hallucinations as a courtroom crisis. The 150-bill figure gives that risk a concrete scale.
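One way to make the compliance line item concrete is to add it to an operating-cost model and see what it does to margin. The sketch below is an assumption-laden illustration: the `compliance_adjusted_margin` helper, the per-state cost, the fixed program cost, and the revenue figures are all hypothetical placeholders, and real costs will vary by statute and by product.

```python
def compliance_adjusted_margin(revenue, operating_cost, states,
                               cost_per_state, fixed_program_cost):
    """Return (compliance cost, margin before, margin after) for a compliance line item.

    Assumes a flat annual cost per state jurisdiction plus one fixed program cost.
    """
    compliance_cost = fixed_program_cost + cost_per_state * len(states)
    margin_before = (revenue - operating_cost) / revenue
    margin_after = (revenue - operating_cost - compliance_cost) / revenue
    return compliance_cost, margin_before, margin_after


# Illustrative inputs only; these are not estimates from the Stanford report.
active_states = ["CA", "NY", "CO", "TX"]
cost, before, after = compliance_adjusted_margin(
    revenue=20_000_000,
    operating_cost=16_000_000,
    states=active_states,
    cost_per_state=75_000,
    fixed_program_cost=200_000,
)
print(f"compliance=${cost:,.0f}  margin before={before:.1%}  margin after={after:.1%}")
```

Because the cost scales with the number of jurisdictions, rerunning the model with a longer `states` list is a quick way to show a buyer how exposure grows as the customer base spreads across more states.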

What the Stanford AI Index 2026 Means for Your Role

For PE and VC Investors

• Benchmark risk is pricing risk. AI model performance scores used in investment theses are drawn from the same benchmark ecosystem Stanford just called broken. Request production-condition performance data, not leaderboard screenshots.

• Per-seat revenue models are stressed by early-career job displacement. Model the ARR impact of a 15–20% reduction in junior developer and entry-level support headcount at your top five customers.

• 150 state AI bills create a patchwork compliance cost that is not yet reflected in most deal models. Add AI regulatory compliance as a line item in operating cost diligence.

• The US-China parity finding means “best-in-class AI model” is not a durable moat claim. Evaluate distribution, data network effects, and workflow depth instead.

| For SaaS Founders Preparing for Exit |

| • The expert-public perception gap is a negotiation gap. Your valuation expectations are calibrated to the expert worldview. Buyer expectations are calibrated to market reality. Bridge that gap with verifiable production metrics — not benchmark citations. |

| • If your user base includes early-career developers or entry-level support agents, prepare a churn scenario analysis for buyer due diligence. Showing you have modeled the risk is better than having a buyer surface it. |

| • Your AI compliance posture across state jurisdictions is a diligence item. Map your current exposure to the most active state regulatory frameworks (CA, NY, CO, TX) before going to market. |

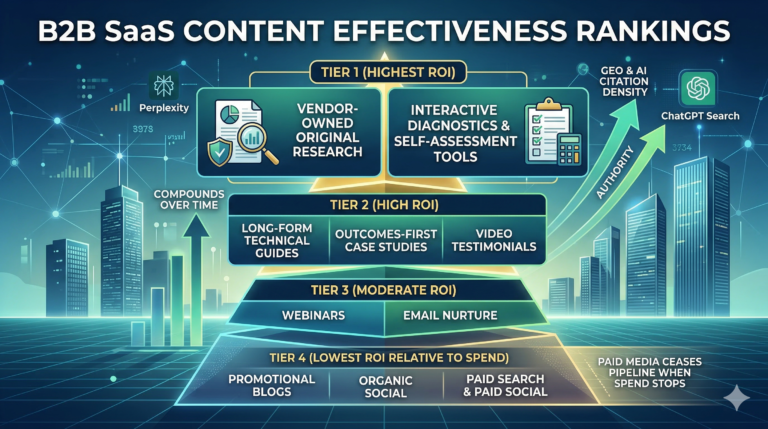

| • GEO and LLM citation presence are emerging brand equity metrics. If buyers cannot find you when they ask ChatGPT or Perplexity about your category, that gap will surface in due diligence. |

For Enterprise CTOs and CPOs

• Benchmark-validated AI procurement decisions are increasingly unreliable. The Stanford finding that a popular math benchmark has a 42% error rate should prompt a review of how your team evaluates AI vendor capabilities.

• Early-career headcount displacement has workforce planning implications beyond AI adoption ROI. Model the second-order effects on knowledge transfer, institutional memory, and long-term engineering capacity.

• AI vendor compliance posture under the new state-level regulatory landscape should be a standard RFP requirement. Ask vendors to map their product against CA SB 53 and NY RAISE Act obligations.

• Consider LLM hallucination rates in mission-critical deployments. Our analysis of AI hallucination rates as a due diligence crisis documents the gap between vendor benchmarks and production performance on complex enterprise tasks.

The Bottom Line

The Stanford AI Index 2026 is not a cause for alarm or celebration. It is a dataset. And like all datasets, its value depends on whether you are reading the right numbers for your specific decision.

For the deal market, the five findings above point in the same direction: AI’s documented progress is real, but the infrastructure built around measuring, governing, and pricing that progress is lagging. Benchmarks are broken. Regulation is fragmented. Workforce impacts are measurable but not yet priced into most SaaS revenue models. The expert-public perception gap creates structural friction in deal negotiations.

The companies best positioned in the current M&A environment are those that can demonstrate AI performance under production conditions — not benchmark conditions. Those that have modeled workforce-driven churn risk. Those with a clear regulatory compliance posture across the states where their customers operate.

If you are evaluating a transaction in 2026, the Stanford AI Index 2026 is required reading — not for the headline numbers, but for the five caveats embedded in the data that most market participants are still not pricing.

For a deeper analysis of how these findings map to your specific transaction or exit process, contact DevelopmentCorporate LLC. You can also explore our related coverage: Q3 2025 Enterprise SaaS M&A Analysis, the 2026 AI Valuation Gap, and our AI Hallucination Due Diligence Framework.
